Reasoned Next Steps on AI Matters

CarefulAI and Citadel AI Collaboration

Situation:

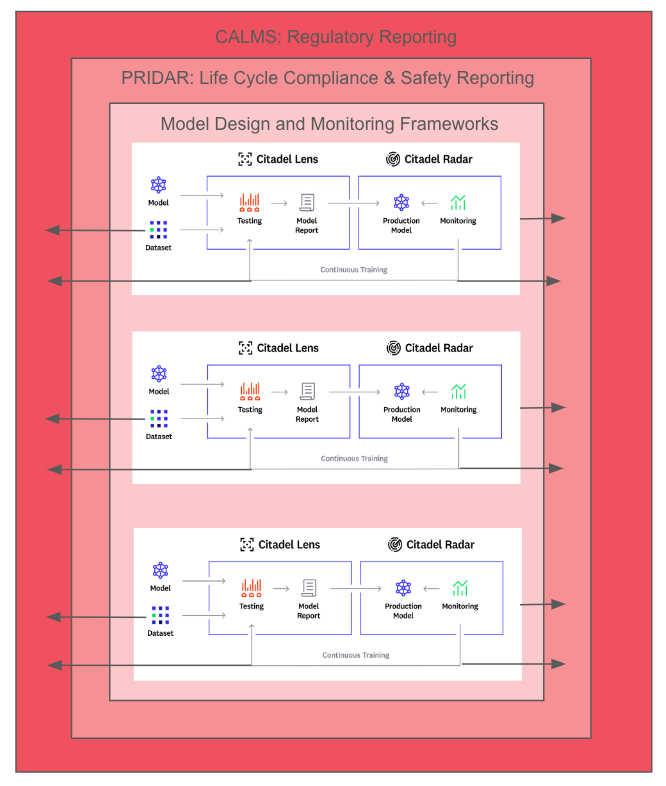

Our vision is to enable reliable, trustworthy generative AI by unifying leading-edge assurance techniques into an integrated platform and method tailored to key generative systems.

CarefulAI (experts in validating life cycles, datasets and alignment) and Citadel AI (experts in continuous model testing) came together during an Innovate UK bid for the assurance of autonomous generative models across the 45 countries represented by the Eureka Network.

Our approach is innovative because it will merge CarefulAI's CLAMS/PRIDAR approach to regulatory reporting of GenAI (e.g. against the EU AI Act, NIST AI RMF or ISO/IEC 42001) with Citadel AI's approach to the technical testing of GenAI models (LangCheck).

The aim is to provide a direct flow of compliance information from model design and monitoring, through standards, to regulatory frameworks, so that everyone with a role in AI assurance (CEOs, buyers, commissioners, operational staff and users) has visibility of all the models that affect their lives.
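To make the intended flow from technical testing to regulatory reporting more concrete, the sketch below shows one way individual test results could be grouped by the framework they provide evidence for, so that non-technical readers see pass/fail status per framework. It is an illustration under assumptions: `TestResult`, `EVIDENCE_MAP` and `compliance_report` are hypothetical names, the metric names, thresholds and metric-to-framework mapping are placeholders rather than clause references, and it does not use the actual LangCheck or CLAMS/PRIDAR interfaces.

```python
from dataclasses import dataclass

@dataclass
class TestResult:
    """One technical test outcome, e.g. a score produced by a model-testing tool."""
    metric: str
    value: float
    threshold: float  # assumed: maximum acceptable value for this metric

    @property
    def passed(self) -> bool:
        return self.value <= self.threshold

# Hypothetical mapping from test metrics to the frameworks they provide evidence for.
# Labels are illustrative placeholders, not references to specific clauses.
EVIDENCE_MAP: dict[str, list[str]] = {
    "toxicity": ["EU AI Act", "NIST AI RMF"],
    "pii_leakage": ["EU AI Act", "ISO/IEC 42001"],
}

def compliance_report(results: list[TestResult]) -> dict[str, dict[str, bool]]:
    """Group pass/fail evidence by framework for non-technical stakeholders."""
    report: dict[str, dict[str, bool]] = {}
    for r in results:
        for framework in EVIDENCE_MAP.get(r.metric, []):
            report.setdefault(framework, {})[r.metric] = r.passed
    return report

results = [TestResult("toxicity", 0.05, 0.2), TestResult("pii_leakage", 0.3, 0.0)]
print(compliance_report(results))
```

Keeping the mapping as data rather than code means assurance staff could update it as frameworks change without touching the test logic.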

This will add value to both firms' operations. The problems we will address, their implications, and the next steps we will take are shown below.

Problems

- Data sharing: for the collaboration to work, model users (governments, businesses and academia) would need to be transparent about model training datasets, model monitoring feedback, and the proposed business impact of groups of models (e.g. in line with ISO/IEC 42001, BS 30440, NIST and EU AI Act guidelines).

- Complexity of Integration: Merging distinct tools and methodologies requires that CarefulAI and Citadel AI harmonise technical and operational protocols.

- Scope of Assurance: Assuring safety and performance in diverse AI systems such as GANs, language models and deep reinforcement learning agents involves addressing a wide range of potential risks and biases that are not static.

- Continuous Evolution: Generative AI technologies are evolving rapidly, so any assurance platform must be adaptable and scalable to future advances.
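Because risks are not static and models keep changing, one way to keep assurance current is to rerun the same test suite on every new model version and compare it against the last accepted version. A minimal sketch, assuming every metric is a "higher is better" score such as a pass rate in [0, 1]; the function name, metric names and tolerance are illustrative assumptions, not part of either firm's tooling.

```python
def find_regressions(
    baseline: dict[str, float],
    candidate: dict[str, float],
    tolerance: float = 0.02,
) -> list[str]:
    """Return metrics on which the candidate model is worse than the baseline.

    Assumes every metric is 'higher is better'. A metric missing from the
    candidate run counts as a regression, because an untested capability
    cannot be assured.
    """
    regressions = []
    for metric, base_score in baseline.items():
        new_score = candidate.get(metric)
        if new_score is None or new_score < base_score - tolerance:
            regressions.append(metric)
    return sorted(regressions)

# Hypothetical scores for two model versions.
v1 = {"factuality_pass_rate": 0.91, "refusal_of_unsafe_prompts": 0.97}
v2 = {"factuality_pass_rate": 0.93, "refusal_of_unsafe_prompts": 0.90}
print(find_regressions(v1, v2))  # ['refusal_of_unsafe_prompts']
```

The tolerance absorbs run-to-run noise; in practice it would be set per metric from repeated runs rather than fixed globally.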

Implications:

- Integration complexities will be managed to maintain a fit-for-purpose assurance process.

- Stakeholder involvement will be broad, to manage bias, alignment and safety concerns across 45 countries.

- We will consider both single-modal and multi-modal assurance to ensure long-term utility and impact.

Next Steps:

- For AI developers, users and regulators: Stay informed about the progress and capabilities of this unified platform to ensure it aligns with current and future needs.

- For specifiers (AI safety experts, ethical AI committees): Provide continuous feedback on the evolving needs of AI safety so that the open platform remains relevant and effective.

- For funders (investors, governmental bodies): Support continuous research and development so that the platform can adapt to new challenges and technologies in generative AI.

LLM Safety and ISO Standards

Situation:

Aligning large language models (LLMs) to meet high-quality, ethical, and safety benchmarks and standards.

Problems:

- User Alignment Testing, guided by ISO 9241-11 and ISO/IEC 25010, might uncover discrepancies in usability and quality, potentially indicating a need for model refinement.

- Safety Testing, with reference to standards like ISO/IEC 27001 and ISO/IEC 29119, is critical for identifying biases, privacy issues, and security vulnerabilities. Inadequate testing can lead to serious ethical and legal repercussions.

- Difficulty in interpreting and implementing these ISO standards can lead to inconsistent testing methodologies and outcomes.

- For emerging standards such as ISO/IEC 42001 and IEEE P7003, ongoing development and revision require continuous adaptation and learning.
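User alignment testing against ISO 9241-11 is easier to apply consistently when its three usability dimensions (effectiveness, efficiency and satisfaction) are computed the same way every time. A minimal sketch, assuming a hypothetical `Session` record per user task; operationalising effectiveness as task completion rate, efficiency as mean time on successful tasks, and satisfaction as a mean 1 to 5 rating is one common choice and an assumption here, not text from the standard.

```python
from dataclasses import dataclass
from statistics import mean

@dataclass
class Session:
    """One user's attempt at a task with the model (hypothetical record format)."""
    completed: bool     # did the user achieve the goal?
    seconds: float      # time spent on the task
    satisfaction: int   # post-task rating, assumed scale 1 (low) to 5 (high)

def usability_summary(sessions: list[Session]) -> dict[str, float]:
    """Summarise test sessions along the three ISO 9241-11 usability dimensions."""
    completed = [s for s in sessions if s.completed]
    return {
        # Effectiveness: share of attempts in which the goal was achieved.
        "effectiveness": len(completed) / len(sessions),
        # Efficiency: mean time on successful attempts only (NaN if none succeeded).
        "efficiency_seconds": mean(s.seconds for s in completed) if completed else float("nan"),
        # Satisfaction: mean rating across all attempts.
        "satisfaction": mean(s.satisfaction for s in sessions),
    }

sessions = [
    Session(True, 40.0, 4),
    Session(True, 60.0, 5),
    Session(False, 120.0, 2),
    Session(True, 50.0, 4),
]
print(usability_summary(sessions))
```

Fixing these definitions in code, and versioning them, is one way to reduce the inconsistent interpretation of standards described above.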

Implications:

- If User Alignment Testing isn't rigorously conducted following the ISO standards, the model might not fully align with user needs, leading to lower satisfaction and adoption rates.

- Inadequate Safety Testing, according to the ISO standards, could result in the model generating biased or harmful outputs, compromising user privacy, and being vulnerable to attacks.

- Misalignment or misunderstanding of ISO standards can lead to ineffective testing processes, potentially leaving significant model flaws undetected.

- Failure to adapt to Emerging Standards could result in the model being outdated or not meeting future legal and ethical requirements.
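The privacy risk named above can be partly screened for automatically as one test within a safety suite. A minimal sketch, assuming model outputs are plain strings: it flags outputs containing e-mail addresses or phone-number-like strings. The patterns are deliberately simple assumptions and would miss many kinds of personal data; passing this check says nothing on its own about conformance with ISO/IEC 27001.

```python
import re

# Illustrative patterns only: real PII detection needs far broader coverage
# (names, addresses, national ID formats) and usually a trained detector.
PII_PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "phone": re.compile(r"\+?\d[\d\s-]{8,}\d"),
}

def screen_outputs(outputs: list[str]) -> list[tuple[int, str]]:
    """Return (output index, PII type) for every pattern that matches an output."""
    findings = []
    for i, text in enumerate(outputs):
        for label, pattern in PII_PATTERNS.items():
            if pattern.search(text):
                findings.append((i, label))
    return findings

outputs = [
    "The capital of France is Paris.",
    "You can reach Jane at jane.doe@example.com.",
    "Call +44 20 7946 0958 for details.",
]
print(screen_outputs(outputs))  # [(1, 'email'), (2, 'phone')]
```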

Next Steps:

- For those passively affected (users and stakeholders): Regularly provide feedback based on user experience to help refine the model according to ISO standards.

- For enablers (developers, testers, and quality assurance teams): Ensure a thorough understanding and application of the relevant ISO standards in testing processes; continuously update testing methods to reflect the latest standards, and consider emerging safety approaches, e.g. CALM.

- For those who specify the steps (regulatory bodies, AI ethics boards): Clarify and communicate the application of ISO standards for LLMs; keep track of and integrate emerging standards into regulatory frameworks.

- For funders (organizations, investors): Invest in training and resources to enable adherence to these standards, and support ongoing adaptation to emerging ISO standards.

Next Steps in Algorithmic Impact Assessments

Situation:

Algorithmic Impact Assessments (AIAs) have emerged as a specialized tool for assessing the risks and impacts of automated decision systems, particularly in the public sector. In Canada, AIAs are mandated under the Canadian Treasury Board’s Directive on Automated Decision-Making for government departments. This contrasts with the private sector, where AIAs are not a formal requirement but are increasingly recognized as important for ethical AI use.

Problems:

1. Lack of Mandate in Private Sector: Unlike in the public sector, there is no formal requirement for AIAs in the private sector, leading to potential inconsistency in how AI risks are managed across industries.

2. Distinctive Approach: AIAs differ from other auditing methods in their comprehensive approach to evaluating impacts on rights, privacy, health, and economic interests. This distinctiveness may lead to challenges in integrating AIAs with existing risk assessment frameworks in organizations that are unfamiliar with their methodology.

3. Ethical and Social Implications: Failure to conduct AIAs can result in overlooking ethical and social implications of AI systems, leading to potential biases, privacy breaches, and mistrust among stakeholders.
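The multi-area scope of an AIA (rights, privacy, health and economic interests) can be sketched as a simple scoring exercise that turns per-area ratings into an overall impact level. This is loosely in the spirit of questionnaire-based tools such as Canada's AIA, but the rating scale, area names and quartile thresholds below are illustrative assumptions, not the Directive's actual scoring method.

```python
from enum import IntEnum

class Impact(IntEnum):
    """Hypothetical ordinal scale for how severely a system may affect an area."""
    NONE = 0
    LOW = 1
    MODERATE = 2
    HIGH = 3
    VERY_HIGH = 4

# Impact areas named in the text; every one must be assessed.
AREAS = ("rights", "privacy", "health", "economic_interests")

def impact_level(ratings: dict[str, Impact]) -> int:
    """Map per-area ratings to an overall level from 1 (lowest) to 4 (highest).

    The level is the share of the maximum possible score, split into
    quartiles. The quartile thresholds are an illustrative assumption.
    """
    missing = [a for a in AREAS if a not in ratings]
    if missing:
        raise ValueError(f"unassessed areas: {missing}")
    score = sum(ratings[a] for a in AREAS)
    share = score / (len(AREAS) * Impact.VERY_HIGH)
    if share <= 0.25:
        return 1
    if share <= 0.50:
        return 2
    if share <= 0.75:
        return 3
    return 4

ratings = {
    "rights": Impact.MODERATE,
    "privacy": Impact.HIGH,
    "health": Impact.NONE,
    "economic_interests": Impact.LOW,
}
print(impact_level(ratings))  # 2
```

A pure sum can hide a single severe impact in one area; a real assessment would likely also escalate based on the worst individual rating.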

Implications:

1. Risk of Unethical AI Practices: Without the widespread adoption of AIAs, especially in the private sector, there's a risk of perpetuating unethical AI practices.

2. Regulatory and Compliance Challenges: Organizations might face regulatory and compliance challenges in future if they don’t align their AI practices with emerging practices such as AIAs.

3. Public Trust and Credibility: For public sector entities, failing to adhere to AIA requirements could lead to loss of public trust and questions about the credibility and fairness of automated decision-making processes.

Next Steps:

1. Adoption in the Private Sector: Encourage private sector entities to adopt AIAs voluntarily to ensure ethical AI use, with potential guidance from industry leaders or regulatory bodies.

2. Awareness and Education: Increase awareness and provide education about the benefits and methodologies of AIAs, differentiating them from other auditing methods.

3. Policy Development: Policymakers should consider extending the mandate of AIAs or similar assessments to the private sector, ensuring a standardized approach to AI risk management.

4. Cross-Sector Collaboration: Foster collaboration between public and private sectors to share best practices and insights related to AIAs, enhancing the overall understanding and application of these assessments.

Next Steps in Private LLM Tuning

Situation:

Implementing Supervised Fine-Tuning (SFT) and Reinforcement Learning from Human Feedback (RLHF) for AI model training is complex and involves multiple software tools and processes.

Problems:

1. Complexity in SFT:

   - SFT demands accurate data collection and annotation, using tools like Labelbox or Prodigy.

   - Machine Learning (ML) frameworks like TensorFlow, PyTorch, and Keras are essential for building, training, and evaluating AI models.

   - Managing versions of datasets and models with tools like DVC or Git-LFS is crucial for reproducibility.

2. Challenges in RLHF:

   - RLHF requires an initially trained model that generates response samples for human review.

   - Human feedback is collected through interfaces that must be clear and easy for reviewers to use.

   - Reward models are trained on this human feedback, using specialized software or scripts, and then guide the AI's learning process.

   - Continuous evaluation and iteration are essential, often involving platforms like Weights & Biases for experiment tracking.
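To illustrate how a reward model is created from human feedback: a widely used formulation in InstructGPT-style RLHF trains the reward model on pairwise comparisons so that the response a human preferred scores higher, minimising -log σ(r_chosen - r_rejected). The sketch below applies that loss with plain stochastic gradient descent. Assumptions: a linear model stands in for a neural network's scalar reward head, and the two-number feature vectors, learning rate and epoch count are hypothetical.

```python
import math

def pairwise_loss(margin: float) -> float:
    """-log(sigmoid(margin)), computed without overflow for large |margin|."""
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))

def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)

def reward(w: list[float], features: list[float]) -> float:
    """Linear reward model: a stand-in for the scalar head of a neural network."""
    return sum(wi * xi for wi, xi in zip(w, features))

def train_reward_model(
    pairs: list[tuple[list[float], list[float]]],
    n_features: int,
    lr: float = 0.5,
    epochs: int = 200,
) -> list[float]:
    """Fit weights so human-preferred responses score higher.

    Each pair is (chosen_features, rejected_features) from one human comparison.
    """
    w = [0.0] * n_features
    for _ in range(epochs):
        for chosen, rejected in pairs:
            margin = reward(w, chosen) - reward(w, rejected)
            # Gradient of -log(sigmoid(margin)) w.r.t. w is
            # -(1 - sigmoid(margin)) * (chosen - rejected); step against it.
            scale = 1.0 - sigmoid(margin)
            w = [wi + lr * scale * (c - r) for wi, c, r in zip(w, chosen, rejected)]
    return w

# Hypothetical feature vectors for response pairs a reviewer compared.
pairs = [
    ([0.9, 0.1], [0.4, 0.2]),
    ([0.7, 0.0], [0.8, 0.9]),
    ([0.6, 0.2], [0.3, 0.1]),
]
w = train_reward_model(pairs, n_features=2)
avg_loss = sum(pairwise_loss(reward(w, c) - reward(w, r)) for c, r in pairs) / len(pairs)
print(f"weights={w}, mean loss={avg_loss:.4f}")
```

The trained reward model then scores new responses during the reinforcement learning stage; that policy-optimisation step is not shown here.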

Implications:

1. Need for Specialized Software:

   - SFT and RLHF require a blend of advanced ML frameworks, data annotation, and reward modeling software.

   - Cloud computing platforms like AWS, Google Cloud, and Azure provide necessary computational power.

2. Resource Intensiveness:

   - These training methods are resource-heavy, demanding computational resources, expert knowledge, and significant human input.

   - Continuous monitoring, evaluation, and iteration are key, making the process time-consuming and complex.
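The computational cost can be estimated before committing resources. A widely used rule of thumb for dense transformer training is roughly 6 FLOPs per parameter per training token (C ≈ 6ND), and the sketch converts that into GPU-days. The accelerator peak throughput and utilisation factor are assumptions to replace with measured values; fine-tuning stages such as SFT and RLHF typically process far fewer tokens than pre-training, so each stage should be estimated separately.

```python
def training_flops(n_params: float, n_tokens: float) -> float:
    """Approximate training compute with the rule of thumb C ≈ 6 * N * D.

    N is the parameter count and D the number of training tokens; the factor 6
    covers the forward (about 2) and backward (about 4) passes of a dense transformer.
    """
    return 6.0 * n_params * n_tokens

def gpu_days(total_flops: float, peak_flops_per_gpu: float, utilization: float = 0.4) -> float:
    """Convert FLOPs to GPU-days at an assumed fraction of peak throughput."""
    sustained = peak_flops_per_gpu * utilization  # FLOP/s actually achieved
    return total_flops / sustained / 86_400       # 86,400 seconds per day

# Hypothetical example: a 7B-parameter model trained on 2T tokens,
# on accelerators with an assumed peak of 3e14 FLOP/s each.
flops = training_flops(7e9, 2e12)
print(f"{flops:.2e} FLOPs ≈ {gpu_days(flops, 3e14):,.0f} GPU-days")
```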

Next Steps:

- For AI Researchers and Developers:

  - Utilize a range of software tools like TensorFlow, PyTorch, OpenAI Gym, and cloud platforms for effective SFT and RLHF implementation.

  - Stay updated with advancements in AI training methodologies.

- For Project Managers:

  - Allocate adequate resources, including computational power and expert personnel.

  - Plan for the complexities and iterative nature of these training methods.

- For Training Teams:

  - Ensure team members are skilled in the latest AI training tools and methodologies.

  - Focus on continuous improvement and learning in AI model training techniques.

- For Funding Bodies:

  - Recognize the resource-intensive nature of advanced AI model training.

  - Provide support for projects that push the boundaries of AI training technologies.

LLM Alignment Next Steps

Situation:

The development of the LLaMA-2 model suite represents a significant advancement in open-source large language models (LLMs), narrowing the gap with proprietary models such as ChatGPT, although it still trails GPT-4 on many benchmarks.

Problems:

1. Data Quality and Quantity: LLM performance depends heavily on the quality and volume of training data.

2. Alignment Process: Reproducing in open-source models the alignment processes used for proprietary models is challenging.
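Because both the quality and the volume of training data matter, a common first step is to clean a corpus before training. A minimal sketch, assuming documents are plain strings: exact deduplication after normalising case and whitespace, plus a minimum-length filter. The word-count threshold is an illustrative assumption; production pipelines typically add near-duplicate detection and learned quality classifiers, which this does not attempt.

```python
import hashlib

def normalise(text: str) -> str:
    """Lowercase and collapse whitespace so trivial variants hash identically."""
    return " ".join(text.lower().split())

def filter_corpus(docs: list[str], min_words: int = 5) -> list[str]:
    """Drop exact (normalised) duplicates and very short documents, keeping order."""
    seen: set[str] = set()
    kept = []
    for doc in docs:
        norm = normalise(doc)
        if len(norm.split()) < min_words:
            continue  # too short to carry much training signal (assumed heuristic)
        digest = hashlib.sha256(norm.encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # duplicate of a document already kept
        seen.add(digest)
        kept.append(doc)
    return kept

docs = [
    "Large language models learn from text data.",
    "large  language models learn from TEXT data.",
    "Too short.",
    "Data quality matters as much as data volume.",
]
print(filter_corpus(docs))
```

Hashing the normalised text keeps memory use small for large corpora, at the cost of catching only exact matches after normalisation.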

Implications:

1. Improved Data Handling: Better data quality and quantity can improve model performance.

2. Increased Transparency: LLaMA-2's use and public documentation of complex alignment processes such as SFT and RLHF increases transparency in LLM development.

Next Steps:

- Researchers: Investigate the implications of improved data handling and alignment processes for LLM development.

- Developers: Explore integration of these approaches in new LLM projects.

- Funding Bodies: Support projects emphasising data quality and advanced alignment processes.
