Research for the BS30440 Evidence Framework: V1.05, October 11 2023

Conducted in support of the BSI Work Group: BSI CH304/-/1

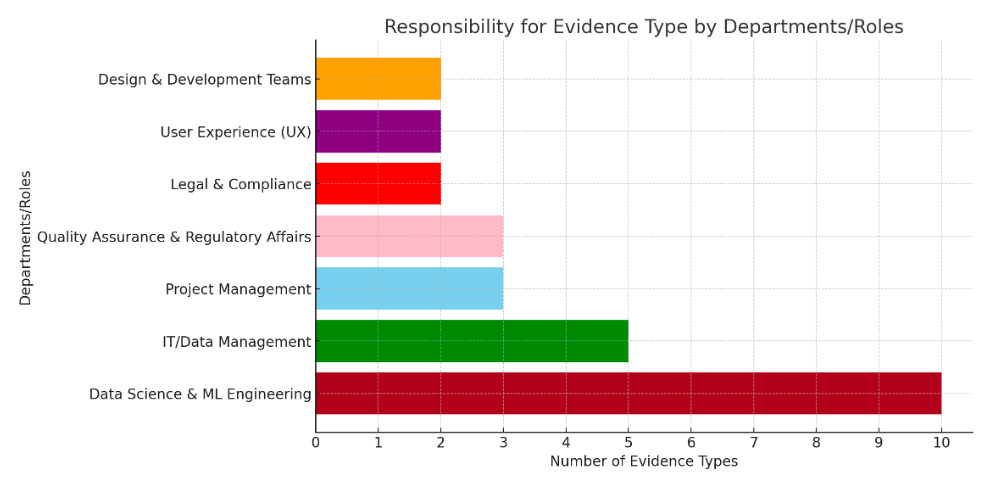

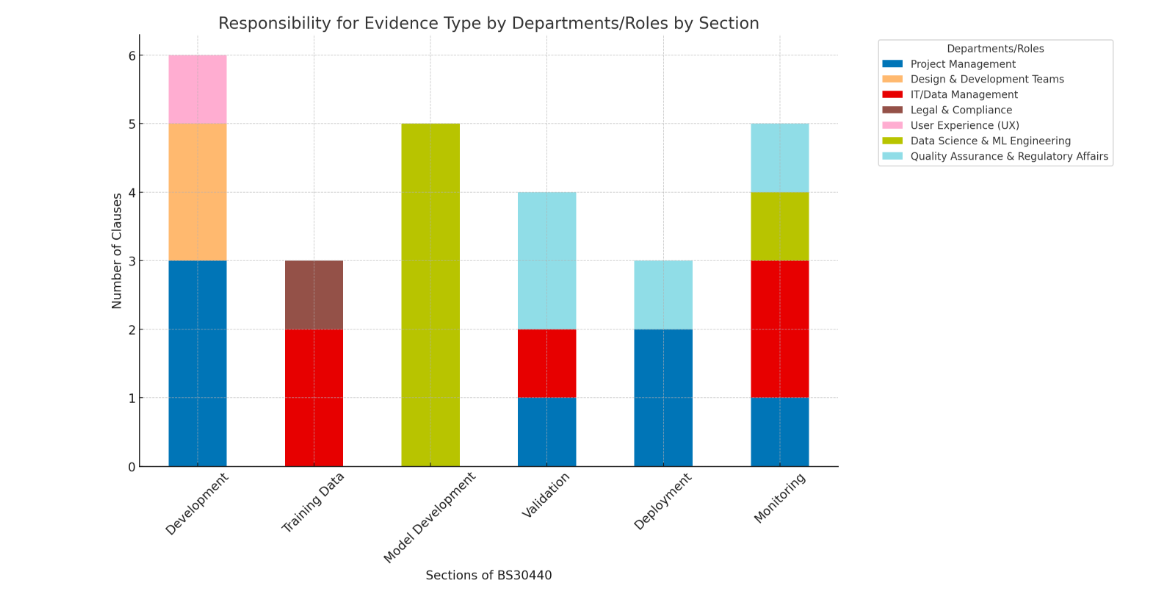

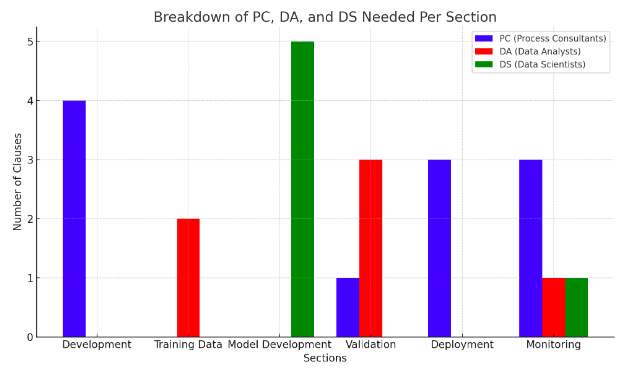

Key for Auditor Type

PC = Process Consultant. Qualification: BSc or higher

DA = Data Analyst. Qualification: BSc in IT or Computer Science

DS = Data Scientist. Qualification: MSc or higher in Computer Science

Conclusions

The evidence needed to comply with BS 30440 mirrors the requirements of UK, EU and US regulators for increased 'Good Machine Learning Practice' evidence relating to safe and ethical data science practice.

To comply with BS 30440 and similar AI product standards (e.g. EU AI Act, BS 42001, 23894 et al.), suppliers will need to assemble more data science evidence than they would need to comply with other lifecycle standards (e.g. the BS 140** series, ISO 13485 etc.).

This will require governance and audit staff to develop high-level data science resources and review tools.

Proposed Evidence Framework for the BSI Work Group: BSI CH304/-/1

BS 30440 SECTIONS

INCEPTION

PC 4.1 The supplier shall evidence that the product addresses a healthcare need.

Evidence Form 1: Literature review documenting published research on the healthcare need and existing solutions. Includes data on disease prevalence, quality of life impact, economic burden, etc.

Evidence Form 2: Survey results, focus group transcripts, or interview notes with patients, providers, and other stakeholders affirming the need. Shows agreement on key pain points.

Evidence Form 3: Project background report tying together the objective research and subjective stakeholder input. Makes the case for how the product will address current unmet needs.

DEVELOPMENT

PC 5.1.1 The supplier shall include relevant stakeholders (including representative and diverse groups of users of and those affected by the product) in the development of the product.

Evidence Form 1: Meeting sign-in sheets demonstrating participation of diverse stakeholders in design workshops and feedback sessions. Includes stakeholder names/credentials and demographic data.

Evidence Form 2: Email communications with stakeholders showing how their input was incorporated into product requirements and design specifications.

Evidence Form 3: Development plan mapping stakeholder engagement touchpoints to major milestones and deliverables.

PC 5.1.2 The supplier shall document how stakeholders were identified and provide a comprehensive record of all stakeholder engagement during development of the product.

Evidence Form 1: Stakeholder analysis report detailing the methodology for identifying and selecting relevant groups for consultation.

Evidence Form 2: Centralised database logging all stakeholder interactions, the feedback received, and the product changes made in response.

Evidence Form 3: Narrative report summarising high-level themes from stakeholder involvement, insights gained, and influence on product direction. Includes supporting data extracts.

PC 5.1.3 The supplier shall provide evidence that the development of the product reflects good practice standards for usability.

Evidence Form 1: Usability test plans and results from sessions with representative end users to evaluate product interfaces and workflows. Should demonstrate conformance to human factors standards.

Evidence Form 2: Heuristic analysis reports assessing the product against established usability principles and guidelines. Documents major findings and severity levels.

Evidence Form 3: Summative usability report tying together findings from usability testing and heuristic analyses. Maps issues to solutions implemented in final product. Confirms compliance with usability engineering good practices.

PC 5.1.4 The supplier shall document stakeholder proposals and how they have been acted upon to demonstrate the informed development of the product (e.g. evidenced by action logs from user engagement, highlighting specific instances where the outcome was influenced through this process).

Evidence Form 1: Stakeholder engagement database with fields for: date and method of engagement; stakeholders involved; feedback or suggestions provided; product changes implemented in response; and date of implementation.

Evidence Form 2: Excerpts from developer sprint notes showing examples where stakeholder perspectives guided implementation priorities and design choices for specific product features.

Evidence Form 3: Stakeholder contribution reports detailing major feature areas where engagement had significant impact on requirements, interactions, workflows, etc. Maps feedback to related product capabilities. Quantifies stakeholder influence.
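The engagement database in Evidence Form 1 of 5.1.4 could be kept as structured records so that stakeholder influence can be queried rather than reconstructed by hand. The sketch below is a minimal illustration only, assuming a hypothetical `EngagementRecord` schema whose fields mirror that list; it is not a format prescribed by BS 30440, and the example entries are invented.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class EngagementRecord:
    """One stakeholder engagement entry (hypothetical schema, not defined by BS 30440)."""
    engagement_date: date
    method: str                            # e.g. "workshop", "interview", "survey"
    stakeholders: list[str]                # roles or groups involved
    feedback: str                          # suggestion or concern raised
    product_change: Optional[str] = None   # change made in response, if any
    implemented_on: Optional[date] = None  # date the change was implemented


def influenced_changes(log: list[EngagementRecord]) -> list[EngagementRecord]:
    """Records where feedback led to an implemented product change."""
    return [r for r in log if r.product_change and r.implemented_on]


def validate(log: list[EngagementRecord]) -> list[str]:
    """Flag entries an auditor would query: undated changes, or dates out of order."""
    problems = []
    for i, r in enumerate(log):
        if r.product_change and r.implemented_on is None:
            problems.append(f"record {i}: change recorded without implementation date")
        if r.implemented_on and r.implemented_on < r.engagement_date:
            problems.append(f"record {i}: implemented before the engagement took place")
    return problems


log = [
    EngagementRecord(date(2023, 3, 1), "workshop", ["GPs"], "Alerts too frequent",
                     "Raised alert threshold", date(2023, 4, 2)),
    EngagementRecord(date(2023, 3, 5), "interview", ["Patients"], "Want plain-language summary"),
]
print(len(influenced_changes(log)), validate(log))
```

Entries with feedback but no recorded change remain in the log, which keeps the record complete as 5.1.2 requires.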

DA 5.2 Training data

Evidence Form 1: Data flow diagram tracing training data from origin sources through any transfers or transformations, clearly documenting responsible parties at each stage.

Evidence Form 2: Training data inventory with metadata on all data elements used, including collection method, structure, content type, geographic/temporal context, subpopulations represented, and other relevant attributes.

Evidence Form 3: Legal and ethical frameworks overview detailing guidelines followed for data access, usage rights, subject consent collection, onward transfer, and storage. Confirms conformance to jurisdiction requirements.

DA 5.2.1 Data flow

Evidence Form 1: End-to-end data flow diagrams tracing the full lineage of training data from origin sources to ultimate usage. Includes all data transfers, transformations, and responsible parties.

Evidence Form 2: Standardized data transit forms cataloging sender, receiver, dates, data types/formats, usage rights, and governing ethics approvals for any transfer or transformation. Provides granular chain of custody.

Evidence Form 3: Master data log registering all data elements introduced at any stage, with metadata on origin, characteristics, processing, and final disposition. Enables audit tracing of specific data packages or elements.
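The chain of custody described in 5.2.1 becomes machine-checkable if each transfer or transformation is logged with an input and an output identifier. The minimal sketch below assumes a hypothetical `TransitRecord` structure and made-up dataset names and approval references. It walks back from a training dataset to its origin sources and flags steps that lack a governing approval reference.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TransitRecord:
    """One transfer or transformation step (hypothetical fields for illustration)."""
    input_id: str       # dataset or package consumed
    output_id: str      # dataset or package produced
    sender: str
    receiver: str
    operation: str      # e.g. "transfer", "de-identify", "merge"
    approval_ref: str   # governing ethics/governance approval; "" if none recorded


def trace_lineage(records, dataset_id):
    """Return every logged step that contributed to dataset_id, walking back to sources."""
    by_output = {}
    for r in records:
        by_output.setdefault(r.output_id, []).append(r)
    steps, frontier, seen = [], [dataset_id], set()
    while frontier:
        current = frontier.pop()
        if current in seen:
            continue
        seen.add(current)
        for r in by_output.get(current, []):
            steps.append(r)
            frontier.append(r.input_id)
    return steps


def origins(records, dataset_id):
    """Identifiers in the lineage that no logged step produced, i.e. origin sources."""
    produced = {r.output_id for r in records}
    return {r.input_id for r in trace_lineage(records, dataset_id)} - produced


def unapproved(steps):
    """Steps with no governing approval reference recorded."""
    return [r for r in steps if not r.approval_ref]


records = [
    TransitRecord("trust_A_raw", "deid_A", "Trust A", "Supplier", "de-identify", "REC-001"),
    TransitRecord("trust_B_raw", "deid_B", "Trust B", "Supplier", "de-identify", "REC-002"),
    TransitRecord("deid_A", "train_v1", "Supplier", "Supplier", "merge", "REC-001"),
    TransitRecord("deid_B", "train_v1", "Supplier", "Supplier", "merge", ""),
]
print(sorted(origins(records, "train_v1")))
print([r.input_id for r in unapproved(trace_lineage(records, "train_v1"))])
```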

DA 5.2.2 Ethics and governance

Evidence Form 1: Copies of research ethics approvals from relevant oversight bodies confirming submitted protocols adhere to ethical collection, informed consent, privacy protections and subject rights standards.

Evidence Form 2: Standardised checklist assessing new data against principles of relevance, consent, proportionality and security prior to usage in model development. Ensures consistent application of ethical frameworks.

Evidence Form 3: Data licensing agreements between involved institutions specifying usage terms, restrictions on allowable transforms/mergers, mandatory safeguards for sensitive attributes, and data handling audit provisions.

DS 5.2.3 Collection procedures

Evidence Form 1: Metadata inventory cataloging key attributes of data collection methods for each element including instruments, formats, platforms, responsible parties and protocols referenced. Enables auditing of provenance.

Evidence Form 2: Data collection oversight report summarising direct-observation audits of selected collection workflows, confirming that they follow the documented protocols.

Evidence Form 3: Data collector qualifications register detailing roles, required competencies, training completion status and measured proficiency evaluations per individual authorised to collect training data elements under sanctioned protocols.

DS 5.2.4 Attributes

Evidence Form 1: Dataset summaries cataloging key statistics including timeframe, geography, demographic subgroup representations, extent of missing data, and other salient descriptive attributes, analysed separately for training, validation and testing data partitions.

Evidence Form 2: Data labelling process documentation detailing protocols, tools, labeller qualifications, oversight methods, and measured accuracy and inter-rater reliability metrics, demonstrating that the manually assigned labels used in supervised learning approaches are reliable and trustworthy.

Evidence Form 3: Model input data dictionaries explicitly enumerating the specific data variables ingested by model development environments, traced directly to their source collection protocols and legal usage rights.
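Evidence Form 2 of 5.2.4 asks for inter-rater reliability metrics for manual labels. Cohen's kappa is one widely used measure for two labellers: it corrects raw agreement for the agreement expected by chance. The dependency-free sketch below uses invented labels; other measures (e.g. Fleiss' kappa or Krippendorff's alpha for more than two labellers) may suit a given labelling design better.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two raters labelling the same items.

    kappa = (p_observed - p_expected) / (1 - p_expected), where p_expected is the
    agreement expected if each rater labelled independently at their own base rates.
    """
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label sequences")
    n = len(labels_a)
    p_observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    p_expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if p_expected == 1:
        # Both raters used one identical category throughout, so kappa is undefined.
        # Returning 1.0 is a convention; an auditor may prefer to flag this case.
        return 1.0
    return (p_observed - p_expected) / (1 - p_expected)


rater_1 = ["pos", "pos", "neg", "neg", "pos", "neg", "neg", "neg", "pos", "neg"]
rater_2 = ["pos", "neg", "neg", "neg", "pos", "neg", "pos", "neg", "pos", "neg"]
print(round(cohens_kappa(rater_1, rater_2), 3))  # 0.583 (80% raw agreement, 52% by chance)
```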

DS 5.2.5 Handling

Evidence Form 1: Data storage and transmission security plan detailing technical safeguards, access controls, encryption requirements, transmission protocols, backup and redundancy provisions across data infrastructures spanning collection, intermediate processing, and model development environments.

Evidence Form 2: Data handling standards compliance checklist mapping implemented controls and organisational policies to relevant jurisdiction, industry, or institutional information security regulations and guidelines. Provides auditable confirmation of adherence.

Evidence Form 3: Secure data workflow diagrams analysing end-to-end infrastructure vulnerabilities, mapping data types to security functions, explicitly documenting protections in place through each processing, storage, transmission and analytics stage as training data traverses enterprise environments between source and usage.

DS 5.3 Model development

Evidence Form 1: Model development methodology technical report detailing all data pre-processing, algorithm and architecture selection rationales, training run configurations, parameter optimisation sequences, performance evaluation increments, infrastructure resources leveraged, and technical development team credentials applied in creating candidate model versions.

Evidence Form 2: Model version lineage diagram tracing model variants from initial prototypes, through evaluation-informed refinements, to the selection of final candidate models, using version-control identifiers and links to each variant's predecessor.

Evidence Form 3: Model training data sample sets and output result sets provided to enable external reconstruction and verification of reported performance claims regarding predictive accuracy, robustness, and comparisons against alternative approaches using independently controlled evaluation methods.

DS 5.3.1.1 The supplier shall document the proposed interaction between end-users and the product, which could include "human‐in‐command", "human‐on‐the‐loop", "human‐in‐the‐loop".

Evidence Form 1: User interface workflow diagrams illustrating intended sequences of user decisions, system behaviors, data flows, and explanatory outputs across manual and automated stages according to explicitly designated function allocations between human and machine capabilities.

Evidence Form 2: Cognitive task analysis report enumerating required situational assessments, option identification, predictive inferences, goal prioritizations, information integration demands, and types of system transparency necessary at key decision gates where human judgment satisfies ethical, legal or performance requirements exceeding system proficiencies.

Evidence Form 3: Applied human factors engineering artifacts including screen layouts, interaction paradigms, input response mappings, and user guides embodying and reinforcing the foundational model of joint human-AI teaming during design implementations.

DS 5.3.1.2 Suppliers shall document their bias in the stages of model design, development, and deployment by evidencing what they see as a model's risk profile versus alternatives. The description shall conform to good practice reporting guidelines.

Evidence Form 1: Algorithmic auditing report assessing discriminatory, unfair, opaque, hacked/gamed or otherwise insufficiently robust outcomes that could emerge from reliance on the model, mapped to mitigations.

Evidence Form 2: Comparative error-rate analyses quantifying false positive and false negative rates across key subpopulations, comparing the model with available alternative solutions to establish relative risk profiles.

Evidence Form 3: Research ethics pre-screen memorandum reviewing proposed development methodology through lenses of representative stakeholder concerns, institutional review protocols, and technical accountability standards governing sensitive model purposes.

DS 5.3.1.3 The supplier shall document the methods used to develop the AI model(s) in the product. The description shall follow good practice reporting guidelines.

Evidence Form 1: Model development methodology technical report detailing all data pre-processing, algorithm and architecture selection rationales, training run configurations, parameter optimisation sequences, performance evaluation increments, infrastructure resources leveraged, and technical development team credentials applied in creating candidate model versions.

Evidence Form 2: Encapsulated software environment installation package with dedicated configurations that fully reproduce runtime build dependencies, code packages, data loaders, architecture specifications and hyperparameters, enabling external auditors to reconstruct an identical model development environment.

Evidence Form 3: Model training lineage activity log capturing ordered time-sequenced entries of executed run profiles with hardware configurations, data and random number seeds, output metrics and code versioning stamps enabling chronological tracing of incremental development.
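A training lineage log of the kind described in Evidence Form 3 of 5.3.1.3 can be as simple as one JSON object per run, appended to a file. The sketch below assumes hypothetical field names and takes the code version as an input (for example a version-control commit identifier supplied by the caller). It fingerprints the configuration so that two runs can be shown to share identical settings. Only Python's own `random` module is seeded here; a real pipeline would also need to seed any numerical or deep learning libraries it uses.

```python
import hashlib
import json
import random
from datetime import datetime, timezone


def set_seeds(seed: int) -> None:
    """Seed Python's RNG; extend with numpy/framework seeding if those are used."""
    random.seed(seed)


def config_fingerprint(config: dict) -> str:
    """SHA-256 of the configuration serialised with sorted keys (key order ignored)."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def make_run_entry(run_id, code_version, data_version, seed, config, hardware, metrics):
    """Build one log entry; all field names are illustrative assumptions."""
    return {
        "run_id": run_id,
        "timestamp_utc": datetime.now(timezone.utc).isoformat(),
        "code_version": code_version,
        "data_version": data_version,
        "seed": seed,
        "config": config,
        "config_sha256": config_fingerprint(config),
        "hardware": hardware,
        "metrics": metrics,
    }


def append_run(path: str, entry: dict) -> None:
    """Append the entry as one line of JSON, giving a chronological run history."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(entry, sort_keys=True) + "\n")


def read_runs(path: str) -> list:
    """Load all logged runs in the order they were appended."""
    with open(path, encoding="utf-8") as fh:
        return [json.loads(line) for line in fh if line.strip()]
```

Because the log is append-only and ordered by time, it supports the chronological tracing of incremental development that the evidence form describes.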

DS 5.3.1.4 The supplier shall document which training data elements/variables were used to develop the model [assuming that it is not all of the data originally collected (see 5.2.4)]. Where data are excluded, the supplier shall document the process and rationale for removal.

Evidence Form 1: Model input data dictionary enumerating each authorised raw, processed and derived variable consumed by the model, its type (structured or unstructured), a complete definition of its values or content, its encoding standard, and allowable operations.

Evidence Form 2: Input data summarisation report profiling aggregate statistics for the numerical, categorical, text, image and time-series elements included in the training set, compared with those originally collected per the data inventory.

Evidence Form 3: Input data selection methodology exposition describing any differential filtering, dimensionality reduction, or complete variable removal operations applied to the collected data inventory when deriving the subset of features deliberately fed to model training algorithms alongside technical justifications.

DS 5.3.2.1 Input variable data flow

Evidence Form 1: End-to-end data flow diagrams tracing lineages of all input data variables from origin sources through upstream collection, partitioning, storage, retrieval and staging phases into final model development environments.

Evidence Form 2: Master input data inventories with metadata detailing protected statuses, allowable usages, governing approvals, and applicable constraints following each atomic data element through successive transformations across full life cycles from raw capture through model ingestion.

Evidence Form 3: Signed data licensing agreements between external contributing organisations and model development entity authorizing specific data elements for narrowly defined modelling usage under strict privacy, sensitivity and ethical protections as detailed in contract clauses.

DS 5.3.2.2 Outcome variables

Evidence Form 1: Output data dictionary defining each allowable variable the model is designed to predict, recommend, classify or otherwise produce, specifying precise technical definitions, possible values with semantics, evaluation metrics, and usage constraints.

Evidence Form 2: Model output validation dossiers demonstrating empirically measured alignment between each output variable and the healthcare construct it is intended to represent within the target application context, grounding predictions in genuine needs and outcomes.

Evidence Form 3: Semantic ontologies formally specifying relationships between output and input variables grounded in knowledge representations codifying the theoretical, experimental, and experiential foundations authorising inferred linkages.

DS 5.3.2.2.1 If the model has output variables related and/or relevant to a medical outcome, the supplier shall document the output variables.

Evidence Form 1: Output data dictionary defining any predicted diagnoses, recommended treatments, triaged referral levels, prescribed interventions, or other clinical decisions produced by the model with precise specifications and usage constraints.

Evidence Form 2: Validation dossiers demonstrating measured model output alignments with authentic medical outcomes within applicable clinical contexts, preventing deviations into irrelevant or unreliable variables.

Evidence Form 3: Clinical care protocols delineating the allowable medical decisions and caregiver workflows for the target applications, establishing lexical and semantic grounds for constraining output variables to relevant, useful, and safe healthcare outcomes.

DS 5.3.2.2.2 The supplier shall provide evidence of the validity of the output variable(s) to the healthcare need identified in Clause 4.

Evidence Form 1: Clinical relevance consultations capturing external subject matter expert feedback assessing output variables for plausibility, significance, and usefulness in addressing authentic medical needs within intended care contexts.

Evidence Form 2: Quantitative variable-need alignment analyses statistically demonstrating the strength of the relationship between output predictions and the prioritised healthcare gaps identified in the Clause 4 needs analysis that substantiates product inception.

Evidence Form 3: Model output evidence provenance diagrams linking validators, evaluation methods, validation datasets, performance metrics, and results 1) demonstrating clinical applicability of outputs and 2) tracing outputs backward along data lineages to original need.

DS 5.3.2.2.3 If surrogate or proxy output variable(s) for the healthcare need are used, the supplier shall provide evidence of the strength of association of the surrogate to the target outcome.

Evidence Form 1: Association analysis quantitatively establishing the strength of empirical association between surrogate outputs and the ultimate healthcare outcomes of interest, based on statistical measures (e.g. correlation) estimated within applicable domain contexts.

Evidence Form 2: Literature review citing published medical evidence linking selected surrogate endpoints to gold standard clinical decision variables across ranges of related contexts, providing theoretical anchors for model outputs lacking direct validation data.

Evidence Form 3: Expert judgement documentation eliciting external evaluations from qualified subject matter authorities regarding plausibility and feasibility for using proposed surrogate proxies based on clinical experience and medical knowledge.

DS 5.3.3.1 The supplier shall document the AI/ML algorithm(s) used in the model (e.g. name of learning algorithm, model architecture and code packages, where appropriate)

Evidence Form 1: Model development methodology technical report providing precise names and version numbers of all commercial, open-source, proprietary or custom-developed machine learning libraries, packages, toolkits, frameworks, and extensions that implement the algorithms applied during model development.

Evidence Form 2: Mathematical specification sheets formally defining the theoretical foundations, equation derivations, computational logics, optimization procedures and probabilistic semantics underpinning the essential mechanisms of learning, generalization and inference encoded within adopted algorithmic techniques.

Evidence Form 3: Encapsulated software environment installation package with dedicated configurations to wholly reproduce runtime build dependencies, code packages and library versions enabling reconstruction of an identical algorithmic training harness by external auditors.

DS 5.3.3.2 The supplier shall document and justify the process of handling missing data, if applicable.

Evidence Form 1: Missing data analysis quantification report profiling all dimensions of incomplete observations within training data including concentrations, patterns, variable correlations, demographic skews, and hypothesised causal factors behind absences.

Evidence Form 2: Data imputation methodology exposition technically detailing all mathematical formulas, data substitutions, simulations, generation methods and theoretical assumptions instituted in pre-processing steps to prepare incomplete training data for model consumption.

Evidence Form 3: Model training run validation reviews assessing downstream impacts of missing data treatments by comparatively evaluating pipeline iterations using deletion, imputation and trimmed datasets to optimise overall integrity.
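The missing-data profile in Evidence Form 1 of 5.3.3.2 can start from simple tabulations. The sketch below uses invented records and hypothetical variable names (`age`, `hba1c`, `sex`). It reports missingness per variable, missingness split by a demographic attribute (which can reveal the demographic skews the evidence form mentions), and the share of rows that complete-case deletion would keep. It does not test the missingness mechanism (MCAR, MAR or MNAR), which needs further analysis.

```python
import math
from collections import defaultdict


def is_missing(value) -> bool:
    """Treat None and float NaN as missing; extend for coded values such as -999 if used."""
    return value is None or (isinstance(value, float) and math.isnan(value))


def missing_fraction_by_variable(rows, variables):
    """Fraction of rows missing each variable (an absent key counts as missing)."""
    n = len(rows)
    return {v: sum(is_missing(r.get(v)) for r in rows) / n for v in variables}


def missing_fraction_by_group(rows, variable, group_key):
    """Fraction missing for one variable, split by a demographic or site attribute."""
    counts = defaultdict(lambda: [0, 0])  # group -> [missing, total]
    for r in rows:
        group = r.get(group_key, "unrecorded")
        counts[group][1] += 1
        counts[group][0] += int(is_missing(r.get(variable)))
    return {g: missing / total for g, (missing, total) in counts.items()}


def complete_case_fraction(rows, variables):
    """Share of rows that would survive listwise deletion on these variables."""
    complete = sum(all(not is_missing(r.get(v)) for v in variables) for r in rows)
    return complete / len(rows)


rows = [
    {"age": 60, "hba1c": None, "sex": "F"},
    {"age": None, "hba1c": 48, "sex": "M"},
    {"age": 55, "hba1c": float("nan"), "sex": "F"},
    {"age": 70, "hba1c": 52, "sex": "M"},
]
print(missing_fraction_by_variable(rows, ["age", "hba1c"]))
print(missing_fraction_by_group(rows, "hba1c", "sex"))
print(complete_case_fraction(rows, ["age", "hba1c"]))
```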

DS 5.3.3.3 The supplier shall document and justify the sample size used to train the model.

Evidence Form 1: Statistical power analysis calculating the minimum number of training observations required across stratified output variable categories and input feature distributions to support performance claims within quantified confidence bounds.

Evidence Form 2: Training set assembly methodology narrative explaining the incremental decisions taken to augment the initial core sample with additional target-environment-specific data, for example through mixture modelling, bootstrap resampling or multi-phase expansion informed by interim evaluation results.

Evidence Form 3: Budgetary specification sheets detailing all fixed infrastructure expenditures, compute service charges, and estimated labor costs for algorithm tuning and model iteration encompassed within total resourcing levels formally provisioned and approved for model development.
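One way to support the calculation in Evidence Form 1 of 5.3.3.3 is a precision-based estimate: how many positive and negative cases are needed to estimate sensitivity and specificity within a chosen confidence-interval half-width d. The sketch below applies the normal-approximation formula n = z² · p(1 − p) / d² to each proportion, then scales by prevalence. This sizes the data needed to support a performance claim rather than what a learning algorithm needs in order to train well (learning-curve analysis is one way to examine the latter). The target values and prevalence in the example are assumptions for illustration.

```python
import math
from statistics import NormalDist


def cases_for_proportion(p: float, half_width: float, confidence: float = 0.95) -> float:
    """Cases needed to estimate a proportion p to +/- half_width (normal approximation)."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return z * z * p * (1 - p) / half_width ** 2


def total_sample_size(sensitivity, specificity, prevalence, half_width, confidence=0.95):
    """Total subjects so that both sensitivity and specificity meet the precision target."""
    n_positive = cases_for_proportion(sensitivity, half_width, confidence)
    n_negative = cases_for_proportion(specificity, half_width, confidence)
    return max(math.ceil(n_positive / prevalence),
               math.ceil(n_negative / (1 - prevalence)))


# Assumed targets: sensitivity 0.90, specificity 0.80, prevalence 10%, +/- 0.05 at 95%.
print(total_sample_size(0.90, 0.80, 0.10, 0.05))  # 1383
```

At low prevalence the positive cases drive the total, which is why the example needs far more subjects than its specificity target alone would suggest.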

DS 5.3.3.4 The supplier shall document how independent training data sets and testing data sets (i.e. holdout data sets) were separated in model development.

Evidence Form 1: Data handling protocols formally defining the authorised uses, restrictions, and segmentation rules applied to divide the aggregated training data corpus into disjoint, isolated sets for each model development phase.

Evidence Form 2: Data partitioning conformity audit validating correct assignment and restrictions of individual data elements into training, evaluation and holdout sets per established data handling protocol specifications.

Evidence Form 3: Chained cryptographic custody ledgers timestamping one-way hashes of training data partitions as they were constructed, signed and transmitted into isolated development environments, providing tamper-evident attestation of separation without exposing the underlying data.
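Evidence Forms 2 and 3 of 5.3.3.4 could rest on two concrete elements: separation at the patient level, so that no individual's records appear in more than one partition, and a recordable fingerprint of each partition. The sketch below shows one possible approach under stated assumptions. It assigns each patient deterministically by hashing a hypothetical `patient_id` with a salt, checks disjointness, and computes a SHA-256 fingerprint of each partition's identifiers that could be entered in a custody ledger. Hashing identifiers in this way is not, on its own, a de-identification measure.

```python
import hashlib


def assign_partition(patient_id, salt="split-v1", train=0.70, validation=0.15):
    """Deterministically map a patient to a partition from a salted SHA-256 hash."""
    digest = hashlib.sha256(f"{salt}:{patient_id}".encode("utf-8")).hexdigest()
    u = int(digest[:8], 16) / 2**32  # approximately uniform in [0, 1)
    if u < train:
        return "train"
    if u < train + validation:
        return "validation"
    return "holdout"


def split_records(records, id_key="patient_id", **kwargs):
    """Split records so that all rows for one patient land in the same partition."""
    parts = {"train": [], "validation": [], "holdout": []}
    for r in records:
        parts[assign_partition(r[id_key], **kwargs)].append(r)
    return parts


def is_disjoint(parts, id_key="patient_id"):
    """True if no patient identifier appears in more than one partition."""
    owner = {}
    for name, rows in parts.items():
        for r in rows:
            if owner.setdefault(r[id_key], name) != name:
                return False
    return True


def partition_fingerprint(rows, id_key="patient_id"):
    """SHA-256 over the sorted identifiers in a partition, for recording in a ledger."""
    ids = sorted({str(r[id_key]) for r in rows})
    return hashlib.sha256("\n".join(ids).encode("utf-8")).hexdigest()


records = [{"patient_id": f"P{i:04d}", "visit": v} for i in range(200) for v in range(3)]
parts = split_records(records)
print(is_disjoint(parts), {k: len(v) for k, v in parts.items()})
```

Because assignment depends only on the salt and the identifier, an auditor holding both can recompute the split and compare fingerprints with those recorded at construction time.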

DS 5.3.3.5 If used as part of model development, the supplier shall document the following: a) data pre-processing procedure(s); b) hyper parameter selection, optimisation and thresholding; and c) internal validation procedure(s).

Evidence Form 1: Model tuning methodology technical report detailing all data normalisation, feature selection, weighting adjustments, hyperparameter optimisations, configuration profiling, cross-validation, and evaluation sequencing conducted during training harness refinement.

Evidence Form 2: Model training provenance diagrams tracing indexed model version lineages across iterative tuning cycles back to parent configurations, random seeds, training subsets, and the performance benchmarks tracked as the model was refined towards improved generalisation.

Evidence Form 3: Encapsulated software environment installation package with dedicated configurations that fully reproduce runtime build dependencies, code packages, data loaders, and hyperparameter settings, enabling external auditors to reconstruct the incremental training.

DS 5.3.4.1 The supplier shall document the process of constructing the final model of the product, where relevant.

Evidence Form 1: Model selection methodology technical brief detailing the rating criteria, performance benchmarks, qualification protocols, and evaluative procedures applied to rank candidate models produced during the training pipeline and select the best fitting final model.

Evidence Form 2: Model test reports validating the robustness, consistency and stability of the final model across multiple simulation runs, using stratified samples drawn from the full range of expected inputs after training is complete.

Evidence Form 3: Model lineage diagram tracing model variants from initial prototypes, through evaluation-informed refinements, to the declaration of the highest-performing candidate as the final model.

DS 5.3.4.2 The supplier shall document and justify the use of any decision thresholds that convert probabilities into discrete outputs in the final model.

Evidence Form 1: Decision threshold report detailing the rationale for selecting specific thresholds, including statistical analyses and impact assessments on model outputs.

Evidence Form 2: Sensitivity analysis results demonstrating the effects of varying decision thresholds on model performance, accuracy, and reliability.

Evidence Form 3: User feedback and expert opinions correlating decision threshold levels with practical and clinical relevance in real-world applications.
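The sensitivity analysis in Evidence Form 2 of 5.3.4.2 amounts to recomputing performance at a range of candidate thresholds. The minimal sketch below uses invented scores and labels and an assumed selection rule (the highest-specificity threshold that still meets a minimum sensitivity). The rule that is clinically appropriate would itself need the justification this clause asks for.

```python
def confusion_at(scores, labels, threshold):
    """Counts (tp, fp, tn, fn) when scores >= threshold are called positive."""
    tp = fp = tn = fn = 0
    for score, label in zip(scores, labels):
        predicted = score >= threshold
        if predicted and label:
            tp += 1
        elif predicted:
            fp += 1
        elif label:
            fn += 1
        else:
            tn += 1
    return tp, fp, tn, fn


def threshold_sweep(scores, labels, thresholds):
    """Sensitivity, specificity and number flagged at each candidate threshold."""
    rows = []
    for t in thresholds:
        tp, fp, tn, fn = confusion_at(scores, labels, t)
        rows.append({
            "threshold": t,
            "sensitivity": tp / (tp + fn) if tp + fn else float("nan"),
            "specificity": tn / (tn + fp) if tn + fp else float("nan"),
            "flagged": tp + fp,  # number of cases the product would flag
        })
    return rows


def pick_threshold(rows, min_sensitivity):
    """Assumed rule: maximise specificity subject to a sensitivity floor."""
    eligible = [r for r in rows if r["sensitivity"] >= min_sensitivity]
    return max(eligible, key=lambda r: r["specificity"])["threshold"] if eligible else None


scores = [0.10, 0.20, 0.35, 0.40, 0.60, 0.70, 0.80, 0.90]
labels = [0, 0, 1, 0, 1, 0, 1, 1]
for row in threshold_sweep(scores, labels, [0.3, 0.5, 0.75]):
    print(row)
```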

DS 5.3.5 The supplier shall evaluate the final model with a holdout data set, i.e., one that has not been used in training the model.

Evidence Form 1: Independent validation report using a holdout dataset, including detailed performance metrics (e.g., accuracy, sensitivity, specificity).

Evidence Form 2: Comparative analysis showing the model's performance on training versus holdout datasets to demonstrate the model’s generalisability.

Evidence Form 3: Documentation of external peer review or audit of the model testing process and outcomes by independent experts.

DS 5.3.5.2 The supplier shall document the performance of the product using appropriate metrics for the proposed task using testing data.

Evidence Form 1: Comprehensive performance metrics report including accuracy, sensitivity, specificity, positive and negative predictive values, calibration, and area under the curve (AUC).

Evidence Form 2: Graphical representations (e.g., ROC curves, calibration plots) visually demonstrating the model’s performance across various metrics.

Evidence Form 3: Comparative performance analysis against established benchmarks or similar models in the field, providing contextual understanding of the metrics.
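The metrics listed in Evidence Form 1 of 5.3.5.2 can be computed from holdout predictions without specialist libraries. The sketch below is illustrative and uses invented data. It computes confusion-matrix metrics at a fixed threshold; AUC via its rank (Mann-Whitney) interpretation, i.e. the probability that a random positive case scores above a random negative one; and the Brier score as a single summary of probabilistic accuracy. The Brier score complements, but does not replace, a calibration plot.

```python
def binary_metrics(y_true, y_pred):
    """Confusion-matrix metrics for 0/1 labels and 0/1 predictions."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for y, p in pairs if y == 1 and p == 1)
    tn = sum(1 for y, p in pairs if y == 0 and p == 0)
    fp = sum(1 for y, p in pairs if y == 0 and p == 1)
    fn = sum(1 for y, p in pairs if y == 1 and p == 0)

    def ratio(a, b):
        return a / b if b else float("nan")

    return {
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
        "ppv": ratio(tp, tp + fp),
        "npv": ratio(tn, tn + fn),
        "accuracy": ratio(tp + tn, len(pairs)),
    }


def auc(y_true, scores):
    """P(score of a positive > score of a negative), ties counted as 1/2. O(n_pos * n_neg)."""
    pos = [s for s, y in zip(scores, y_true) if y == 1]
    neg = [s for s, y in zip(scores, y_true) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative case")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def brier_score(y_true, probabilities):
    """Mean squared difference between predicted probability and observed 0/1 outcome."""
    return sum((p - y) ** 2 for p, y in zip(probabilities, y_true)) / len(y_true)


y_true = [0, 0, 1, 0, 1, 0, 1, 1]
scores = [0.10, 0.20, 0.35, 0.40, 0.60, 0.70, 0.80, 0.90]
y_pred = [1 if s >= 0.5 else 0 for s in scores]
print(binary_metrics(y_true, y_pred), auc(y_true, scores), brier_score(y_true, scores))
```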

DS 5.3.5.3 The supplier shall document and justify the choice of performance and safety metrics used as part of the model testing.

Evidence Form 1: Justification report outlining the rationale for selecting specific performance and safety metrics, including relevance to clinical outcomes and user needs.

Evidence Form 2: Stakeholder (e.g., clinicians, patients) feedback reports on the perceived importance and relevance of chosen metrics.

Evidence Form 3: Literature review or expert consensus documents supporting the use of the chosen metrics for the specific healthcare application.

DS 5.3.5.4 The supplier shall document exploration for bias undertaken in model training and testing and document any bias mitigation strategies introduced.

Evidence Form 1: Bias assessment report detailing findings from analysis of model performance across diverse demographic groups.

Evidence Form 2: Documentation of bias mitigation strategies employed during model training and testing, including algorithmic adjustments or dataset diversification.

Evidence Form 3: Post-deployment performance reports highlighting ongoing monitoring of bias and effectiveness of implemented mitigation strategies.
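A bias assessment of the kind described in Evidence Form 1 of 5.3.5.4 often starts by comparing error rates across subgroups. The minimal sketch below uses invented data and group labels. It computes a rate per group together with the number of cases that rate rests on, and the largest between-group gap. The minimum group size and the choice of metrics to compare are assumptions the supplier would need to justify.

```python
from collections import defaultdict


def rate_by_group(y_true, y_pred, groups, given_label, hit_label):
    """Per group: share of cases with y_true == given_label whose y_pred == hit_label.

    given_label=1, hit_label=1 gives sensitivity; given_label=0, hit_label=0 gives specificity.
    Returns {group: (rate, number_of_cases)}.
    """
    counts = defaultdict(lambda: [0, 0])  # group -> [hits, eligible cases]
    for y, p, g in zip(y_true, y_pred, groups):
        if y == given_label:
            counts[g][1] += 1
            counts[g][0] += int(p == hit_label)
    return {g: (hits / n, n) for g, (hits, n) in counts.items() if n}


def max_gap(rates, min_n=1):
    """Largest difference in rate between groups with at least min_n cases, or None."""
    values = [rate for rate, n in rates.values() if n >= min_n]
    return max(values) - min(values) if len(values) >= 2 else None


y_true = [1, 1, 1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 0, 1, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "A", "A", "B", "B", "B", "B"]
sensitivity = rate_by_group(y_true, y_pred, groups, 1, 1)
specificity = rate_by_group(y_true, y_pred, groups, 0, 0)
print(sensitivity, max_gap(sensitivity), specificity, max_gap(specificity))
```

Reporting the case count alongside each rate matters because gaps between small groups can be dominated by sampling noise.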

DS 5.3.6 The supplier shall document the nature and sources of data used in the testing of the final model.

Evidence Form 1: Comprehensive data inventory listing all datasets used in testing, including sources, nature, and volume of data.

Evidence Form 2: Data provenance and processing logs detailing the journey of each dataset from acquisition to testing.

Evidence Form 3: Ethical compliance certificates or reports for data use, ensuring adherence to privacy laws and consent guidelines.

DS 5.3.6.1 The supplier shall ensure and document that the data used for testing is representative of the intended use environment.

Evidence Form 1: Representativeness analysis report comparing testing data characteristics with those of the intended use environment.

Evidence Form 2: Expert review or consensus statement on the appropriateness and representativeness of the testing data.

Evidence Form 3: Statistical analyses demonstrating the alignment of testing data demographics, conditions, and scenarios with real-world settings.
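For the statistical comparison in Evidence Form 3 of 5.3.6.1, one simple approach is to compare category proportions in the test data with figures for the intended use population (for example from service or census statistics). The sketch below computes the standardised difference for each category and the total variation distance. The 0.1 flag level is a rule of thumb borrowed from covariate-balance practice, not a BS 30440 requirement, and the data are invented.

```python
import math
from collections import Counter


def proportions(values):
    """Share of each category in a sequence of categorical values."""
    counts = Counter(values)
    return {k: c / len(values) for k, c in counts.items()}


def standardised_difference(p_test, p_target):
    """(p_test - p_target) / sqrt of the mean of the two Bernoulli variances."""
    pooled_var = (p_test * (1 - p_test) + p_target * (1 - p_target)) / 2
    return 0.0 if pooled_var == 0 else (p_test - p_target) / math.sqrt(pooled_var)


def compare_to_target(test_values, target_props, flag_at=0.1):
    """Per-category comparison of test-set proportions with target-population proportions."""
    observed = proportions(test_values)
    report = {}
    for category in sorted(set(observed) | set(target_props)):
        p_test = observed.get(category, 0.0)
        p_target = target_props.get(category, 0.0)
        d = standardised_difference(p_test, p_target)
        report[category] = {"test": p_test, "target": p_target,
                            "std_diff": d, "flag": abs(d) > flag_at}
    return report


def total_variation_distance(report):
    """Half the sum of absolute proportion differences (0 = identical mix, 1 = disjoint)."""
    return sum(abs(r["test"] - r["target"]) for r in report.values()) / 2


test_sex = ["F"] * 40 + ["M"] * 60
report = compare_to_target(test_sex, {"F": 0.51, "M": 0.49})
print(report, total_variation_distance(report))
```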

DS 5.3.7 The supplier shall evaluate and document the performance of the model in the intended use environment.

Evidence Form 1: Field performance report detailing model efficacy, accuracy, and reliability in the actual use environment.

Evidence Form 2: User feedback and case studies demonstrating the model's practical impact in real-world healthcare settings.

Evidence Form 3: Continuous performance monitoring logs showing the model’s adaptability and stability over time in the use environment.

DS 5.4.1 The supplier shall ensure and document that the model outputs are clinically relevant and meaningful.

Evidence Form 1: Clinical relevance report establishing the significance of model outputs in healthcare decision-making.

Evidence Form 2: Feedback from healthcare professionals on the practical utility and clinical applicability of the model outputs.

Evidence Form 3: Case studies or clinical trials demonstrating how model outputs have impacted patient care and outcomes.

DS 5.4.2 The supplier shall ensure that the outputs of the model are transparent and interpretable to users.

Evidence Form 1: User interface screenshots or demos showing clear, understandable model outputs.

Evidence Form 2: User training and documentation materials explaining the interpretation of model outputs.

Evidence Form 3: Feedback from users (e.g., clinicians) on their experiences and challenges in understanding and using model outputs.

DS 5.4.3 The supplier shall provide training to users to ensure effective and safe use of the product.

Evidence Form 1: Training module outlines and content, tailored to different user groups.

Evidence Form 2: User competency assessments and feedback on the training program.

Evidence Form 3: Post-training evaluation reports demonstrating users’ proficiency and confidence in using the product.

DS 5.5 The supplier shall have a documented process for updating the model and managing different versions of the model.

Evidence Form 1: Version control log detailing each update, the reasons for the changes, and the impacts on model performance.

Evidence Form 2: Update protocol documents outlining the process for initiating, testing, and deploying model updates.

Evidence Form 3: Stakeholder communication records, including notifications of updates and feedback on the impacts of these changes.

DS 5.5.1 The supplier shall document how new data and insights are incorporated into model updates.

Evidence Form 1: Data integration reports showing how new datasets or insights are assimilated into the model.

Evidence Form 2: Impact assessment analyses detailing the effects of new data on model performance and decision-making.

Evidence Form 3: Documentation of ethical and legal considerations addressed when integrating new data.

PC 5.5.2 The supplier shall continuously monitor the performance of the model post-deployment and document the findings.

Evidence Form 1: Continuous monitoring reports including performance metrics, user feedback, and incident logs.

Evidence Form 2: Periodic performance review summaries highlighting trends, challenges, and areas for improvement.

Evidence Form 3: Corrective action records demonstrating responses to performance issues or user concerns.
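Continuous monitoring reports could include the Population Stability Index (PSI). PSI compares the distribution of a model input or output score in live use against a reference sample, such as the validation data. The sketch below is minimal and rests on several assumptions. The ten quantile bins, the small epsilon used for empty bins and the function name are all illustrative choices. PSI also measures distributional drift only, so it complements outcome-based performance metrics rather than replacing them.

```python
import bisect
import math


def population_stability_index(reference, live, bins=10):
    """Population Stability Index (PSI) between a reference sample and a live sample.

    Bin edges are quantiles of the reference sample; a small epsilon keeps
    the logarithm finite when a bin is empty in either sample.
    """
    if not reference or not live:
        raise ValueError("both samples must be non-empty")
    ordered = sorted(reference)
    # Interior edges at the 1/bins, 2/bins, ... quantiles of the reference data
    edges = [ordered[int(len(ordered) * i / bins)] for i in range(1, bins)]

    def proportions(values):
        counts = [0] * bins
        for v in values:
            counts[bisect.bisect_right(edges, v)] += 1
        return [c / len(values) for c in counts]

    eps = 1e-6
    p_ref, p_live = proportions(reference), proportions(live)
    return sum((q - p) * math.log((q + eps) / (p + eps)) for p, q in zip(p_ref, p_live))
```

Values below about 0.1 are commonly read as stable, and values above about 0.25 as a significant shift. These are industry rules of thumb rather than requirements of BS 30440, so any alert level used in monitoring reports would need its own justification.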

PC 5.6 The supplier shall ensure and document compliance with all relevant regulatory requirements for the product.

Evidence Form 1: Regulatory compliance checklist and certification documents relevant to the product.

Evidence Form 2: Audit reports from regulatory bodies or third-party auditors confirming compliance.

Evidence Form 3: Risk management and mitigation documentation in line with regulatory standards.

PC 5.7 The supplier shall prioritise and document measures taken to ensure the safety of patients and users of the product.

Evidence Form 1: Safety protocol documents outlining measures and guidelines for protecting patient and user safety.

Evidence Form 2: Incident reports and response strategies relating to safety concerns.

Evidence Form 3: Feedback from healthcare professionals and patients on safety aspects of the product.

PC 5.8 The supplier shall document and address ethical considerations relevant to the product, including patient consent and data privacy.

Evidence Form 1: Ethical review reports detailing considerations such as patient consent, data privacy, and fairness.

Evidence Form 2: Consent forms and privacy policies demonstrating adherence to ethical standards.

Evidence Form 3: Stakeholder engagement records discussing the ethical implications of the product.

VALIDATION

PC 6.1 The supplier shall engage with relevant stakeholders throughout the product lifecycle to ensure the product meets user and patient needs.

Evidence Form 1: Stakeholder engagement logs detailing meetings, communications, and feedback throughout the product lifecycle.

Evidence Form 2: Documentation of how stakeholder input has been incorporated into the product design and updates.

Evidence Form 3: Stakeholder satisfaction surveys and reports assessing the alignment of the product with user and patient needs.

DA 6.2 The supplier shall ensure transparency in the development process and be accountable for the product’s performance.

Evidence Form 1: Development process documentation providing detailed insight into the model design, data used, and decision-making processes.

Evidence Form 2: Publicly available product performance reports and accountability statements.

Evidence Form 3: Incident response and rectification records demonstrating accountability in addressing product performance issues.

DS 6.3 The supplier shall commit to continuous learning and improvement of the product based on performance data and user feedback.

Evidence Form 1: Continuous improvement logs showing ongoing updates, enhancements, and modifications based on data and feedback.

Evidence Form 2: User feedback and performance data analysis reports driving product improvements.

Evidence Form 3: Documented reviews and updates of learning protocols and development algorithms in response to emerging data and trends.

DA 6.4 The supplier shall implement robust data governance practices to ensure data quality, security, and compliance with regulations.

Evidence Form 1: Data governance policy documents outlining practices for data quality, security, and compliance.

Evidence Form 2: Audit reports on data management practices verifying adherence to governance standards.

Evidence Form 3: Incident logs related to data breaches or issues, along with response and remediation actions taken.

DA 6.5 The supplier shall conduct comprehensive risk management to identify, assess, and mitigate risks associated with the product.

Evidence Form 1: Risk assessment reports detailing identified risks, impact assessments, and mitigation strategies.

Evidence Form 2: Risk management protocol documentation showing systematic approaches to risk identification and mitigation.

Evidence Form 3: Historical logs of identified risks and the effectiveness of implemented mitigation strategies over time.

PC 6.6 The supplier shall have a clear plan for the product’s end-of-life, including decommissioning and data handling.

Evidence Form 1: End-of-life plan document outlining strategies for decommissioning, data migration, and user transition.

Evidence Form 2: Stakeholder communications on end-of-life plans, ensuring transparency and preparation.

Evidence Form 3: Post-decommissioning report analysing the process, challenges, and lessons learned.

____

DEPLOYMENT

PC 7.1 The supplier shall document the deployment process of the product, ensuring it is consistent with the intended use and user needs.

Evidence Form 1: Deployment plan detailing the steps, timelines, and considerations for rolling out the product.

Evidence Form 2: User training and support materials provided at the time of deployment.

Evidence Form 3: Post-deployment feedback reports from users, confirming the product meets the intended use and user needs.

PC 7.2 The supplier shall establish a system for collecting and addressing user feedback continuously.

Evidence Form 1: User feedback collection system documentation, including methods and frequency of data collection.

Evidence Form 2: Summary reports of user feedback, highlighting key issues and responses.

Evidence Form 3: Change logs showing how user feedback has led to product modifications or improvements.

PC PS 7.3 The supplier shall monitor and document the product's performance in real-world settings, ensuring it aligns with the expected outcomes.

Evidence Form 1: Real-world performance monitoring reports, including data on efficacy, reliability, and user satisfaction.

Evidence Form 2: Comparative analysis of expected versus actual performance metrics in real-world settings.

Evidence Form 3: Case studies or user testimonials illustrating the product's impact in real-world scenarios.

____

MONITORING

PC 8.1 The supplier shall conduct post-market surveillance to monitor the safety and effectiveness of the product after release.

Evidence Form 1: Post-market surveillance plan detailing the methodologies and frequency of monitoring.

Evidence Form 2: Surveillance reports summarizing findings on safety and effectiveness.

Evidence Form 3: Incident reports and corrective actions taken in response to post-market surveillance findings.

PC 8.2 The supplier shall ensure ongoing compliance with evolving regulatory requirements and document any changes made to maintain compliance.

Evidence Form 1: Documentation of regulatory updates and revisions to the product to maintain compliance.

Evidence Form 2: Audit reports from regulatory bodies or third-party auditors verifying ongoing compliance.

Evidence Form 3: Change logs and impact assessments related to compliance updates.

DS 8.3 The supplier shall maintain the integrity and security of data used and generated by the product throughout its lifecycle, up to and including decommissioning.

Evidence Form 1: Data security and integrity protocols, including measures for protection against breaches and unauthorised access.

Evidence Form 2: Incident logs related to data security, along with responses and improvements made.

Evidence Form 3: Regular audits or assessments of data management systems to ensure ongoing integrity and security.

INCEPTION

PC 4.1 The supplier shall evidence the product addresses a healthcare need:

Evidence Form 1: Literature review documenting published research on the healthcare need and existing solutions. Includes data on disease prevalence, quality of life impact, economic burden, etc.

Evidence Form 2: Survey results, focus group transcripts, or interview notes with patients, providers, and other stakeholders affirming the need. Shows agreement on key pain points.

Evidence Form 3: Project background report tying together the objective research and subjective stakeholder input. Makes the case for how the product will address current unmet needs.

DEVELOPMENT

PC 5.1.1 The supplier shall include relevant stakeholders (including representative and diverse groups of users of and those affected by the product) in the development of the product.

Evidence Form 1: Meeting sign-in sheets demonstrating participation of diverse stakeholders in design workshops and feedback sessions. Includes stakeholder names/credentials and demographic data.

Evidence Form 2: Email communications with stakeholders showing how their input was incorporated into product requirements and design specifications.

Evidence Form 3: Development plan mapping stakeholder engagement touchpoints to major milestones and deliverables.

PC 5.1.2 The supplier shall document how stakeholders were identified and provide a comprehensive record of all stakeholder engagement during development of the product.

Evidence Form 1: Stakeholder analysis report detailing the methodology for identifying and selecting relevant groups for consultation.

Evidence Form 2: Centralised database logging all stakeholder interactions, the feedback received, and the product changes made in response.

Evidence Form 3: Narrative report summarising high-level themes from stakeholder involvement, insights gained, and influence on product direction. Includes supporting data extracts.

PC 5.1.3 The supplier shall provide evidence that the development of the product reflects good practice standards for usability.

Evidence Form 1: Usability test plans and results from sessions with representative end users to evaluate product interfaces and workflows. Should demonstrate conformance to human factors standards.

Evidence Form 2: Heuristic analysis reports assessing the product against established usability principles and guidelines. Documents major findings and severity levels.

Evidence Form 3: Summative usability report tying together findings from usability testing and heuristic analyses. Maps issues to solutions implemented in final product. Confirms compliance with usability engineering good practices.

PC 5.1.4 The supplier shall document stakeholder proposals and how they have been acted upon to demonstrate the informed development of the product (e.g. evidenced by action logs from user engagement, highlighting specific instances where the outcome was influenced through this process).

Evidence Form 1: Stakeholder engagement database with fields for: date and method of engagement; stakeholders involved; feedback/suggestions provided; product changes implemented in response; and date of implementation.

Evidence Form 2: Excerpts from developer sprint notes showing examples where stakeholder perspectives guided implementation priorities and design choices for specific product features.

Evidence Form 3: Stakeholder contribution reports detailing major feature areas where engagement had significant impact on requirements, interactions, workflows, etc. Maps feedback to related product capabilities. Quantifies stakeholder influence.

DA 5.2 Training data

Evidence Form 1: Data flow diagram tracing training data from origin sources through any transfers or transformations, clearly documenting responsible parties at each stage.

Evidence Form 2: Training data inventory with metadata on all data elements used, including collection method, structure, content type, geographic/temporal context, subpopulations represented, and other relevant attributes.

Evidence Form 3: Legal and ethical frameworks overview detailing the guidelines followed for data access, usage rights, subject consent collection, onward transfer, and storage. Confirms conformance to jurisdictional requirements.

DA 5.2.1 Data flow

Evidence Form 1: End-to-end data flow diagrams tracing the full lineage of training data from origin sources to ultimate usage. Includes all data transfers, transformations, and responsible parties.

Evidence Form 2: Standardized data transit forms cataloging sender, receiver, dates, data types/formats, usage rights, and governing ethics approvals for any transfer or transformation. Provides granular chain of custody.

Evidence Form 3: Master data log registering all data elements introduced at any stage, with metadata on origin, characteristics, processing, and final disposition. Enables audit tracing of specific data packages or elements.
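The lineage evidence above amounts to a directed graph of transfers and transformations. The minimal sketch below assumes each transfer is recorded as a hypothetical (source, destination, operation, responsible party) tuple. It shows how an auditor could trace every upstream step, and the party responsible for it, behind a given data package. The node names and parties are invented for illustration.

```python
from collections import deque

# Hypothetical lineage records: (source, destination, operation, responsible party)
LINEAGE = [
    ("ehr_extract", "pseudonymised_ehr", "pseudonymisation", "Trust IG team"),
    ("pseudonymised_ehr", "training_table", "feature derivation", "Supplier data team"),
    ("lab_feed", "training_table", "join on study ID", "Supplier data team"),
]


def trace_upstream(node, records):
    """Return every lineage record upstream of `node`, nearest steps first."""
    incoming = {}
    for record in records:
        incoming.setdefault(record[1], []).append(record)
    seen, trail, queue = set(), [], deque([node])
    while queue:
        current = queue.popleft()
        for record in incoming.get(current, []):
            if record not in seen:
                seen.add(record)
                trail.append(record)
                queue.append(record[0])  # continue from this record's source
    return trail


for src, dst, op, party in trace_upstream("training_table", LINEAGE):
    print(f"{src} -> {dst}: {op} ({party})")
```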

DA 5.2.2 Ethics and governance

Evidence Form 1: Copies of research ethics approvals from relevant oversight bodies confirming submitted protocols adhere to ethical collection, informed consent, privacy protections and subject rights standards.

Evidence Form 2: Standardised checklist assessing new data against principles of relevance, consent, proportionality and security prior to usage in model development. Ensures consistent application of ethical frameworks.

Evidence Form 3: Data licensing agreements between involved institutions specifying usage terms, restrictions on allowable transforms/mergers, mandatory safeguards for sensitive attributes, and data handling audit provisions.

DS 5.2.3 Collection procedures

Evidence Form 1: Metadata inventory cataloging key attributes of data collection methods for each element including instruments, formats, platforms, responsible parties and protocols referenced. Enables auditing of provenance.

Evidence Form 2: Data collection oversight report summarising direct-observation audits of selected collection workflows, confirming that they align with the documented collection protocols.

Evidence Form 3: Data collector qualifications register detailing roles, required competencies, training completion status and measured proficiency evaluations per individual authorised to collect training data elements under sanctioned protocols.

DS 5.2.4 Attributes

Evidence Form 1: Dataset summaries cataloging key statistics including timeframe, geography, demographic subgroup representations, extent of missing data, and other salient descriptive attributes, analysed separately for training, validation and testing data partitions.

Evidence Form 2: Data labelling process documentation detailing protocols, tools, human labeller qualifications, oversight methods, and measured accuracy and inter-rater reliability metrics. Where supervised learning relies on manually labelled observations, these demonstrate that the label assignments are reliable and trustworthy.

Evidence Form 3: Model input data dictionaries explicitly enumerating the data variables ingested by the model development environment, each traced to its source collection protocol and legal usage rights.
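Evidence Form 2 mentions inter-rater reliability metrics. Cohen's kappa is one widely used measure of agreement between two labellers, corrected for the agreement expected by chance. The sketch below assumes both labellers tagged the same observations in the same order. More than two labellers would need a different statistic, such as Fleiss' kappa, and the function name is illustrative.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two labellers who tagged the same observations in the same order."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same, non-empty set of items")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Agreement expected if each rater labelled at random with their own label frequencies
    expected = sum(freq_a[k] * freq_b[k] for k in freq_a) / (n * n)
    if expected == 1.0:
        # Kappa is undefined when both raters used one identical label throughout;
        # this sketch reports perfect agreement by convention.
        return 1.0
    return (observed - expected) / (1 - expected)
```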

DS 5.2.5 Handling

Evidence Form 1: Data storage and transmission security plan detailing technical safeguards, access controls, encryption requirements, transmission protocols, backup and redundancy provisions across data infrastructures spanning collection, intermediate processing, and model development environments.

Evidence Form 2: Data handling standards compliance checklist mapping implemented controls and organisational policies to relevant jurisdiction, industry, or institutional information security regulations and guidelines. Provides auditable confirmation of adherence.

Evidence Form 3: Secure data workflow diagrams analysing end-to-end infrastructure vulnerabilities, mapping data types to security functions, explicitly documenting protections in place through each processing, storage, transmission and analytics stage as training data traverses enterprise environments between source and usage.

DS 5.3 Model development

Evidence Form 1: Model development methodology technical report detailing all data pre-processing, algorithm and architecture selection rationales, training run configurations, parameter optimisation sequences, performance evaluation increments, infrastructure resources leveraged, and technical development team credentials applied in creating candidate model versions.

Evidence Form 2: Model version lineage diagram tracing the genealogy of model variants from initial prototypes, through interim refinements informed by evaluation, to the selection of final candidate models, using version control nomenclature and linking each version to its predecessor.

Evidence Form 3: Model training data sample sets and output result sets provided to enable external reconstruction and verification of reported performance claims regarding predictive accuracy, robustness, and comparisons against alternative approaches using independently controlled evaluation methods.

DS 5.3.1.1 The supplier shall document the proposed interaction between end-users and the product, which could include "human‐in‐command", "human‐on‐the‐loop", "human‐in‐the‐loop".

Evidence Form 1: User interface workflow diagrams illustrating intended sequences of user decisions, system behaviors, data flows, and explanatory outputs across manual and automated stages according to explicitly designated function allocations between human and machine capabilities.

Evidence Form 2: Cognitive task analysis report enumerating required situational assessments, option identification, predictive inferences, goal prioritizations, information integration demands, and types of system transparency necessary at key decision gates where human judgment satisfies ethical, legal or performance requirements exceeding system proficiencies.

Evidence Form 3: Applied human factors engineering artifacts including screen layouts, interaction paradigms, input response mappings, and user guides embodying and reinforcing the foundational model of joint human-AI teaming during design implementations.

DS 5.3.1.2 Suppliers shall document their bias in the stages of model design, development, and deployment by evidencing what they see as a model's risk profile versus alternatives. The description shall conform to good practice reporting guidelines.

Evidence Form 1: Algorithmic auditing report assessing discriminatory, unfair, opaque, hacked/gamed or otherwise insufficiently robust outcomes that could emerge from reliance on the model, mapped to mitigations.

Evidence Form 2: Comparative error-rate analyses quantifying false positive and false negative rates across key subpopulations, contrasting the model with available alternative solutions to establish relative risk profiles.

Evidence Form 3: Research ethics pre-screen memorandum reviewing proposed development methodology through lenses of representative stakeholder concerns, institutional review protocols, and technical accountability standards governing sensitive model purposes.
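The subgroup comparison in Evidence Form 2 can be sketched as follows. The sketch assumes binary reference labels and binary model outputs coded 0/1, plus a hypothetical subgroup attribute for each observation. Running the same function on a comparator's outputs over the same observations, such as current clinical practice, gives the "versus alternatives" contrast the clause asks for. A subgroup with no positive or no negative cases returns NaN rather than a misleading rate.

```python
def subgroup_error_rates(y_true, y_pred, groups):
    """False positive and false negative rates per subgroup (labels and outputs coded 0/1)."""
    counts = {}
    for t, p, g in zip(y_true, y_pred, groups):
        c = counts.setdefault(g, {"tp": 0, "fn": 0, "fp": 0, "tn": 0})
        if t == 1:
            c["tp" if p == 1 else "fn"] += 1
        else:
            c["fp" if p == 1 else "tn"] += 1
    rates = {}
    for g, c in counts.items():
        negatives, positives = c["fp"] + c["tn"], c["fn"] + c["tp"]
        rates[g] = {
            "fpr": c["fp"] / negatives if negatives else float("nan"),
            "fnr": c["fn"] / positives if positives else float("nan"),
        }
    return rates
```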

DS 5.3.1.3 The supplier shall document the methods used to develop the AI model(s) in the product. The description shall follow good practice reporting guidelines.

Evidence Form 1: Model development methodology technical report detailing all data pre-processing, algorithm and architecture selection rationales, training run configurations, parameter optimisation sequences, performance evaluation increments, infrastructure resources leveraged, and technical development team credentials applied in creating candidate model versions.

Evidence Form 2: Encapsulated software environment installation package with dedicated configurations that fully reproduce runtime build dependencies, code packages, data loaders, architecture specifications and hyperparameters, enabling external auditors to reconstruct an identical model training environment.

Evidence Form 3: Model training lineage activity log capturing ordered time-sequenced entries of executed run profiles with hardware configurations, data and random number seeds, output metrics and code versioning stamps enabling chronological tracing of incremental development.
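A training lineage log of the kind described in Evidence Form 3 could be kept as append-only JSON lines, one per run. The sketch below is illustrative only. The field names, the `TrainingRunEntry` class and the `append_entry` helper are hypothetical, and a real log would typically also reference the environment package from Evidence Form 2.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class TrainingRunEntry:
    """One entry in a hypothetical training lineage log (field names are illustrative)."""
    run_id: str
    code_version: str    # e.g. a version control commit identifier
    data_snapshot: str   # identifier or hash of the training data partition used
    random_seed: int
    hardware: str
    metrics: dict = field(default_factory=dict)
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


def append_entry(path, entry):
    """Append one run as a JSON line, so the file preserves chronological order."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(asdict(entry), sort_keys=True) + "\n")
```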

DS 5.3.1.4 The supplier shall document which training data elements/variables were used to develop the model [assuming that it is not all of the data originally collected (see 5.2.4)]. Where data are excluded, the supplier shall document the process and rationale for removal.

Evidence Form 1: Model input data dictionary enumerating each authorised raw and processed variable consumed by the model, mapped to its structured, unstructured or derived type, with complete definitions of values/content, encoding standards, and allowable operations.

Evidence Form 2: Input data summarisation report profiling aggregated statistics across the numerical, categorical, text, image and time-series elements of the training set variables included, compared with those originally collected per the training data inventory.

Evidence Form 3: Input data selection methodology exposition describing any differential filtering, dimensionality reduction, or complete variable removal operations applied to the collected data inventory when deriving the subset of features deliberately fed to model training algorithms alongside technical justifications.

DS 5.3.2.1 Input variable data flow

Evidence Form 1: End-to-end data flow diagrams tracing lineages of all input data variables from origin sources through upstream collection, partitioning, storage, retrieval and staging phases into final model development environments.

Evidence Form 2: Master input data inventories with metadata detailing protected statuses, allowable usages, governing approvals, and applicable constraints following each atomic data element through successive transformations across full life cycles from raw capture through model ingestion.

Evidence Form 3: Signed data licensing agreements between external contributing organisations and model development entity authorizing specific data elements for narrowly defined modelling usage under strict privacy, sensitivity and ethical protections as detailed in contract clauses.

DS 5.3.2.2 Outcome variables

Evidence Form 1: Output data dictionary defining each allowable variable the model is designed to predict, recommend, classify or otherwise produce, specifying precise technical definitions, possible values with semantics, evaluation metrics, and usage constraints.

Evidence Form 2: Model output validation dossiers demonstrating the empirically measured alignment between each output variable and its corresponding healthcare construct within the target application context, grounding predictions in genuine needs and outcomes.

Evidence Form 3: Semantic ontologies formally specifying relationships between output and input variables grounded in knowledge representations codifying the theoretical, experimental, and experiential foundations authorising inferred linkages.

DS 5.3.2.2.1 If the model has output variables related and/or relevant to a medical outcome, the supplier shall document the output variables.

Evidence Form 1: Output data dictionary defining any predicted diagnoses, recommended treatments, triaged referral levels, prescribed interventions, or other clinical decisions produced by the model with precise specifications and usage constraints.

Evidence Form 2: Validation dossiers demonstrating measured model output alignments with authentic medical outcomes within applicable clinical contexts, preventing deviations into irrelevant or unreliable variables.

Evidence Form 3: Clinical care protocols delineating the allowable medical decisions and caregiver workflows for the target applications, establishing lexical and semantic grounds for constraining output variables to relevant, useful, and safe healthcare outcomes.

DS 5.3.2.2.2 The supplier shall provide evidence of the validity of the output variable(s) to the healthcare need identified in Clause 4.

Evidence Form 1: Clinical relevance consultations capturing external subject matter expert feedback assessing output variables for plausibility, significance, and usefulness in addressing authentic medical needs within intended care contexts.

Evidence Form 2: Quantitative variable-need alignment analyses statistically demonstrating strengths of relationship between output predictions and prioritised healthcare gaps cited in background needs analysis captured in Clause 4 substantiating product inception.

Evidence Form 3: Model output evidence provenance diagrams linking validators, evaluation methods, validation datasets, performance metrics, and results 1) demonstrating clinical applicability of outputs and 2) tracing outputs backward along data lineages to original need.

DS 5.3.2.2.3 If surrogate or proxy output variable(s) for the healthcare need are used, the supplier shall provide evidence of the strength of association of the surrogate to the target outcome.

Evidence Form 1: Surrogate association analysis quantifying the strength of the empirical association between surrogate outputs and the ultimate healthcare outcomes of interest, based on statistical correlations measured within the applicable domain contexts.

Evidence Form 2: Literature review citing published medical evidence linking selected surrogate endpoints to gold standard clinical decision variables across ranges of related contexts, providing theoretical anchors for model outputs lacking direct validation data.

Evidence Form 3: Expert judgement documentation eliciting external evaluations from qualified subject matter authorities regarding plausibility and feasibility for using proposed surrogate proxies based on clinical experience and medical knowledge.
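Take a continuous surrogate and a continuous target outcome measured on the same patients. The strength of association in Evidence Form 1 could then be summarised with a Pearson correlation and an approximate 95% confidence interval from the Fisher z-transformation, as in the sketch below. This is an assumption-laden illustration, and binary or time-to-event outcomes would need other measures. A strong correlation alone also does not show that effects on the surrogate carry over to the target outcome, so the literature and expert evidence in Evidence Forms 2 and 3 remain necessary.

```python
import math


def pearson_r(x, y):
    """Pearson correlation between paired surrogate (x) and target outcome (y) values."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need at least two paired observations")
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined when either variable is constant")
    return sxy / math.sqrt(sxx * syy)


def fisher_ci(r, n, z=1.96):
    """Approximate confidence interval for r via the Fisher z-transformation (needs n > 3)."""
    if n <= 3:
        raise ValueError("the Fisher approximation needs more than three pairs")
    if abs(r) >= 1:
        return (r, r)
    centre, se = math.atanh(r), 1 / math.sqrt(n - 3)
    return (math.tanh(centre - z * se), math.tanh(centre + z * se))
```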

DS 5.3.3.1 The supplier shall document the AI/ML algorithm(s) used in the model (e.g. name of learning algorithm, model architecture and code packages, where appropriate).

Evidence Form 1: Model development methodology technical report providing precise names and version numbers of all commercial, open-source, proprietary or custom-developed machine learning libraries, packages, toolkits, frameworks, and extensions forming algorithms applied during model genesis workflows.

Evidence Form 2: Mathematical specification sheets formally defining the theoretical foundations, equation derivations, computational logics, optimization procedures and probabilistic semantics underpinning the essential mechanisms of learning, generalization and inference encoded within adopted algorithmic techniques.

Evidence Form 3: Encapsulated software environment installation package with dedicated configurations to wholly reproduce runtime build dependencies, code packages and library versions enabling reconstruction of an identical algorithmic training harness by external auditors.

DS 5.3.3.2 The supplier shall document and justify the process of handling missing data, if applicable.

Evidence Form 1: Missing data analysis quantification report profiling all dimensions of incomplete observations within training data including concentrations, patterns, variable correlations, demographic skews, and hypothesised causal factors behind absences.

Evidence Form 2: Data imputation methodology exposition technically detailing all mathematical formulas, data substitutions, simulations, generation methods and theoretical assumptions instituted in pre-processing steps to prepare incomplete training data for model consumption.

Evidence Form 3: Model training run validation reviews assessing downstream impacts of missing data treatments by comparatively evaluating pipeline iterations using deletion, imputation and trimmed datasets to optimise overall integrity.
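The missing data profile in Evidence Form 1 can start from the fraction of missing values per variable, both overall and within each subgroup. Uneven missingness across demographic groups is itself a potential source of bias. The sketch below assumes that rows are dictionaries and that missing values are stored as `None`. It also assumes the subgroup attribute is named by a hypothetical `group_key`, and it reports the pooled figure under the key `ALL`.

```python
def missingness_by_subgroup(rows, variables, group_key):
    """Fraction of missing values per variable, pooled ("ALL") and per subgroup.

    Assumes each row is a dict and a missing value is stored as None.
    """
    profile = {}
    for var in variables:
        totals, missing = {}, {}
        for row in rows:
            for key in ("ALL", row.get(group_key, "unknown")):
                totals[key] = totals.get(key, 0) + 1
                if row.get(var) is None:
                    missing[key] = missing.get(key, 0) + 1
        profile[var] = {k: missing.get(k, 0) / totals[k] for k in totals}
    return profile
```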

DS 5.3.3.3 The supplier shall document and justify the sample size used to train the model.

Evidence Form 1: Statistical power analysis calculating the minimum number of training observations required, across stratified output variable categories and input feature distributions, to support performance claims within quantified confidence bounds.

Evidence Form 2: Training set assembly methodology narrative explaining the incremental decisions taken to augment the initial core sample with additional target-environment-specific data, informed by mixture modelling, bootstrap aggregation and the results of interim evaluation rounds.

Evidence Form 3: Budgetary specification sheets detailing all fixed infrastructure expenditures, compute service charges, and estimated labor costs for algorithm tuning and model iteration encompassed within total resourcing levels formally provisioned and approved for model development.
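Evidence Form 1 calls for quantified confidence bounds. One commonly used normal-approximation formula, often attributed to Buderer, gives the number of positive cases needed to estimate sensitivity to within a half-width d as n_pos = z²·Se(1 − Se)/d². This is scaled up by prevalence to a total sample, and the analogous calculation is made for specificity. The sketch below applies the formula, and the expected sensitivity, specificity and prevalence are assumptions the supplier would have to justify. It sizes the data needed to substantiate performance claims. It does not say how much data a particular learning algorithm needs to train well, which is usually assessed empirically, for example with learning curves.

```python
import math


def diagnostic_sample_size(sensitivity, specificity, prevalence, half_width, z=1.96):
    """Minimum total sample so that the sensitivity and specificity estimates each have
    a confidence interval of +/- half_width (normal approximation)."""
    if not 0 < prevalence < 1 or half_width <= 0:
        raise ValueError("prevalence must lie in (0, 1) and half_width must be positive")
    n_pos = z ** 2 * sensitivity * (1 - sensitivity) / half_width ** 2
    n_neg = z ** 2 * specificity * (1 - specificity) / half_width ** 2
    # Scale case counts up to a total sample using the expected prevalence
    return math.ceil(max(n_pos / prevalence, n_neg / (1 - prevalence)))
```

With an expected sensitivity and specificity of 0.9, a prevalence of 10% and a half-width of 0.05, the sketch returns 1,383. That figure is driven by the number of positive cases required.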

DS 5.3.3.4 The supplier shall document how independent training data sets and testing data sets (i.e. holdout data sets) were separated in model development.

Evidence Form 1: Data handling protocols formally delineating authorised uses, restrictive bindings, and segmentation rules applied to subdivide aggregated training data corpus into disjoint isolated sets for specialised model development phases.

Evidence Form 2: Data partitioning conformity audit validating correct assignment and restrictions of individual data elements into training, evaluation and holdout sets per established data handling protocol specifications.

Evidence Form 3: Chained cryptographic custody ledgers timestamping one-way hashes of training data partitions as they were constructed, signed and transmitted into isolated development environments, providing tamper-evident attestation of their separation without exposing the underlying data.
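The separation and custody evidence in Evidence Forms 2 and 3 can be sketched with standard-library hashing. The example assumes partitions are defined by record identifiers at the level where leakage matters, such as patient rather than image. It hashes identifiers with a keyed HMAC so that short identifier spaces cannot simply be enumerated. It then checks that no identifier appears in two partitions. Finally, it appends each partition's content hash to a hash-chained ledger, in which altering any earlier entry breaks verification. The key, function names and ledger fields are hypothetical. The digital signatures and trusted timestamping that the Evidence Form also mentions are outside this sketch.

```python
import hashlib
import hmac
import json


def record_digest(record_id, key):
    """Keyed one-way hash of a record identifier, so raw IDs never enter the ledger."""
    return hmac.new(key, record_id.encode("utf-8"), hashlib.sha256).hexdigest()


def overlapping_partitions(partitions, key):
    """Return pairs of partitions that share any record (an empty list means disjoint)."""
    hashed = {name: {record_digest(r, key) for r in ids} for name, ids in partitions.items()}
    names = sorted(hashed)
    return [(a, b) for i, a in enumerate(names) for b in names[i + 1:] if hashed[a] & hashed[b]]


def _entry_hash(body):
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_ledger(ledger, partition_name, ids, timestamp, key):
    """Append an entry whose hash also covers the previous entry's hash (a hash chain)."""
    digests = sorted(record_digest(r, key) for r in ids)
    body = {
        "partition": partition_name,
        "content_hash": hashlib.sha256("".join(digests).encode("utf-8")).hexdigest(),
        "timestamp": timestamp,
        "prev_hash": ledger[-1]["entry_hash"] if ledger else "0" * 64,
    }
    ledger.append({**body, "entry_hash": _entry_hash(body)})
    return ledger


def verify_ledger(ledger):
    """Recompute each entry hash and check every link back to its predecessor."""
    prev = "0" * 64
    for entry in ledger:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if entry["prev_hash"] != prev or entry["entry_hash"] != _entry_hash(body):
            return False
        prev = entry["entry_hash"]
    return True
```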

DS 5.3.3.5 If used as part of model development, the supplier shall document the following: a) data pre-processing procedure(s); b) hyperparameter selection, optimisation and thresholding; and c) internal validation procedure(s).

Evidence Form 1: Model tuning methodology technical report detailing all data normalisation, feature selection, weighting adjustments, hyperparameter optimisation, configuration profiling, cross-validation, and evaluation sequencing conducted during training harness refinement.

Evidence Form 2: Model training provenance diagrams tracing indexed model version lineages across iterative tuning cycles back to parent configurations, random seeds, training subsets, and the performance benchmarks tracked as generalisation improved.

Evidence Form 3: Encapsulated software environment installation package with dedicated configurations that fully reproduce runtime build dependencies, code packages, data loaders, and hyperparameter settings, enabling external auditors to reconstruct the incremental training.

DS 5.3.4.1 The supplier shall document the process of constructing the final model of the product, where relevant.

Evidence Form 1: Model selection methodology technical brief detailing the rating criteria, performance benchmarks, qualification protocols, and evaluative procedures applied to rank candidate models produced during the training pipeline and select the best fitting final model.

Evidence Form 2: Model user test reports validating the robustness, consistency and stability of the final model across multiple predictive simulation runs, using stratified sampling from the full range of expected inputs after training is complete.

Evidence Form 3: Model lineage diagram tracing the genealogy of model variants from initial prototypes, through interim refinements informed by evaluation, to the declaration of the highest-performing variant as the final model.

DS 5.3.4.2 The supplier shall document and justify the use of any decision thresholds that convert probabilities into discrete outputs in the final model.

Evidence Form 1: Decision threshold report detailing the rationale for selecting specific thresholds, including statistical analyses and impact assessments on model outputs.

Evidence Form 2: Sensitivity analysis results demonstrating the effects of varying decision thresholds on model performance, accuracy, and reliability.

Evidence Form 3: User feedback and expert opinions correlating decision threshold levels with practical and clinical relevance in real-world applications.
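A sensitivity analysis for Evidence Form 2 can start from a simple threshold sweep that reports sensitivity and specificity at each candidate cut-off. The sketch below assumes binary labels (1 = condition present), predicted probabilities, and the convention that a case is classed as positive when its probability is greater than or equal to the threshold; the function names are hypothetical.

```python
from typing import List, Sequence, Tuple


def confusion_at_threshold(
    y_true: Sequence[int], y_prob: Sequence[float], threshold: float
) -> Tuple[int, int, int, int]:
    """Count (tp, fp, tn, fn), classing a case positive when prob >= threshold."""
    tp = fp = tn = fn = 0
    for label, prob in zip(y_true, y_prob):
        predicted = 1 if prob >= threshold else 0
        if predicted == 1 and label == 1:
            tp += 1
        elif predicted == 1:
            fp += 1
        elif label == 0:
            tn += 1
        else:
            fn += 1
    return tp, fp, tn, fn


def threshold_sweep(
    y_true: Sequence[int], y_prob: Sequence[float], thresholds: Sequence[float]
) -> List[Tuple[float, float, float]]:
    """Return (threshold, sensitivity, specificity) for each candidate threshold."""
    rows = []
    for t in thresholds:
        tp, fp, tn, fn = confusion_at_threshold(y_true, y_prob, t)
        sensitivity = tp / (tp + fn) if tp + fn else float("nan")
        specificity = tn / (tn + fp) if tn + fp else float("nan")
        rows.append((t, sensitivity, specificity))
    return rows


if __name__ == "__main__":
    labels = [0, 0, 1, 1]  # toy data for illustration
    probs = [0.1, 0.4, 0.35, 0.8]
    for t, sens, spec in threshold_sweep(labels, probs, [0.3, 0.5]):
        print(f"threshold={t:.2f} sensitivity={sens:.2f} specificity={spec:.2f}")
```

Tabulating the sweep makes the trade-off explicit: lowering the threshold in the toy data raises sensitivity at the cost of specificity, which is the kind of evidence the justification in Evidence Form 1 would need to address.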

DS 5.3.5 The supplier shall evaluate the final model with a holdout data set, i.e., one that has not been used in training the model.

Evidence Form 1: Independent validation report using a holdout dataset, including detailed performance metrics (e.g., accuracy, sensitivity, specificity).

Evidence Form 2: Comparative analysis showing the model's performance on training versus holdout datasets to demonstrate the model’s generalisability.

Evidence Form 3: Documentation of external peer review or audit of the model testing process and outcomes by independent experts.
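Evidence Form 2 depends on the holdout set genuinely being unseen. One way to make that auditable is a seeded split on patient identifiers, followed by an explicit disjointness check, so that no patient contributes records to both sets; records would then be assigned to a set according to their patient identifier. This is a minimal sketch assuming each record carries such an identifier; `holdout_split` and `assert_disjoint` are hypothetical helpers, not part of any named library.

```python
import random
from typing import Iterable, List, Tuple


def holdout_split(
    patient_ids: Iterable[str], holdout_fraction: float, seed: int
) -> Tuple[List[str], List[str]]:
    """Split unique patient IDs into (train, holdout) using a fixed seed."""
    if not 0.0 < holdout_fraction < 1.0:
        raise ValueError("holdout_fraction must be between 0 and 1")
    ids = sorted(set(patient_ids))  # de-duplicate and fix order before shuffling
    rng = random.Random(seed)  # local generator, so the split is repeatable for a given seed
    rng.shuffle(ids)
    n_holdout = round(len(ids) * holdout_fraction)
    return ids[n_holdout:], ids[:n_holdout]


def assert_disjoint(train_ids: Iterable[str], holdout_ids: Iterable[str]) -> None:
    """Raise if any patient appears in both the training and holdout sets."""
    overlap = set(train_ids) & set(holdout_ids)
    if overlap:
        raise ValueError(f"patients in both sets: {sorted(overlap)}")


if __name__ == "__main__":
    ids = [f"patient-{i:03d}" for i in range(10)]  # hypothetical identifiers
    train, holdout = holdout_split(ids, holdout_fraction=0.2, seed=7)
    assert_disjoint(train, holdout)
    print(len(train), len(holdout))  # 8 2
```

Splitting by patient rather than by record avoids a subtle leak: if one patient's repeat visits fall on both sides of the split, holdout performance can overstate generalisability.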

DS 5.3.5.2 The supplier shall document the performance of the product using appropriate metrics for the proposed task using testing data.

Evidence Form 1: Comprehensive performance metrics report including accuracy, sensitivity, specificity, positive and negative predictive values, calibration, and area under the curve (AUC).

Evidence Form 2: Graphical representations (e.g., ROC curves, calibration plots) visually demonstrating the model’s performance across various metrics.

Evidence Form 3: Comparative performance analysis against established benchmarks or similar models in the field, providing contextual understanding of the metrics.
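Most of the metrics named in Evidence Form 1 follow directly from the confusion matrix, and AUC can be computed as the probability that a randomly chosen positive case scores higher than a randomly chosen negative one, with ties counted as half. The sketch below is a dependency-free illustration under those definitions; in practice a validated library such as scikit-learn would more likely be used, and the function names here are hypothetical.

```python
from typing import Dict, Sequence


def _ratio(numerator: int, denominator: int) -> float:
    return numerator / denominator if denominator else float("nan")


def binary_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Confusion-matrix metrics for binary labels (1 = condition present)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return {
        "accuracy": _ratio(tp + tn, len(pairs)),
        "sensitivity": _ratio(tp, tp + fn),
        "specificity": _ratio(tn, tn + fp),
        "ppv": _ratio(tp, tp + fp),
        "npv": _ratio(tn, tn + fn),
    }


def auc_by_ranking(y_true: Sequence[int], y_score: Sequence[float]) -> float:
    """ROC AUC as the share of (positive, negative) pairs ranked correctly.

    Ties count as half a correct ranking (the Mann-Whitney formulation).
    """
    pos = [s for t, s in zip(y_true, y_score) if t == 1]
    neg = [s for t, s in zip(y_true, y_score) if t == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative case")
    correct = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                correct += 1.0
            elif p == n:
                correct += 0.5
    return correct / (len(pos) * len(neg))


if __name__ == "__main__":
    labels = [0, 0, 1, 1]  # toy data for illustration
    scores = [0.1, 0.4, 0.35, 0.8]
    preds = [1 if s >= 0.5 else 0 for s in scores]
    print(binary_metrics(labels, preds))
    print(auc_by_ranking(labels, scores))  # 0.75
```

The pairwise loop grows with the product of positive and negative case counts, which is acceptable for a small illustration; for large test sets a sort-based computation or a library routine would be preferable.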

DS 5.3.5.3 The supplier shall document and justify the choice of performance and safety metrics used as part of the model testing.

Evidence Form 1: Justification report outlining the rationale for selecting specific performance and safety metrics, including relevance to clinical outcomes and user needs.

Evidence Form 2: Stakeholder (e.g., clinicians, patients) feedback reports on the perceived importance and relevance of chosen metrics.

Evidence Form 3: Literature review or expert consensus documents supporting the use of the chosen metrics for the specific healthcare application.

DS 5.3.5.4 The supplier shall document exploration for bias undertaken in model training and testing and document any bias mitigation strategies introduced.

Evidence Form 1: Bias assessment report detailing findings from analysis of model performance across diverse demographic groups.

Evidence Form 2: Documentation of bias mitigation strategies employed during model training and testing, including algorithmic adjustments or dataset diversification.

Evidence Form 3: Post-deployment performance reports highlighting ongoing monitoring of bias and effectiveness of implemented mitigation strategies.
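For Evidence Form 1, one simple starting point is to compute a metric separately for each demographic group and report the largest between-group gap. The sketch below uses sensitivity as that metric, which is an assumption; other measures (for example differences in specificity or calibration) may be more appropriate for a given product. Groups with no positive cases are omitted because sensitivity is undefined for them.

```python
from collections import defaultdict
from typing import Dict, Hashable, Sequence


def sensitivity_by_group(
    y_true: Sequence[int], y_pred: Sequence[int], groups: Sequence[Hashable]
) -> Dict[Hashable, float]:
    """Sensitivity (true positive rate) for each group that has positive cases."""
    tp: Dict[Hashable, int] = defaultdict(int)
    positives: Dict[Hashable, int] = defaultdict(int)
    for label, pred, group in zip(y_true, y_pred, groups):
        if label == 1:
            positives[group] += 1
            if pred == 1:
                tp[group] += 1
    return {g: tp[g] / n for g, n in positives.items()}


def largest_gap(per_group: Dict[Hashable, float]) -> float:
    """Difference between the best- and worst-performing groups."""
    if not per_group:
        raise ValueError("no groups to compare")
    values = per_group.values()
    return max(values) - min(values)


if __name__ == "__main__":
    labels = [1, 1, 1, 1, 0, 0]  # toy data for illustration
    preds = [1, 1, 1, 0, 0, 1]
    group = ["A", "A", "B", "B", "A", "B"]  # hypothetical group labels
    per_group = sensitivity_by_group(labels, preds, group)
    print(per_group, largest_gap(per_group))  # {'A': 1.0, 'B': 0.5} 0.5
```

Repeating the same calculation on post-deployment data would give a like-for-like series for the ongoing monitoring described in Evidence Form 3.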

DS 5.3.6 The supplier shall document the nature and sources of data used in the testing of the final model.

Evidence Form 1: Comprehensive data inventory listing all datasets used in testing, including sources, nature, and volume of data.

Evidence Form 2: Data provenance and processing logs detailing the journey of each dataset from acquisition to testing.

Evidence Form 3: Ethical compliance certificates or reports for data use, ensuring adherence to privacy laws and consent guidelines.

DS 5.3.6.1 The supplier shall ensure and document that the data used for testing is representative of the intended use environment.

Evidence Form 1: Representativeness analysis report comparing testing data characteristics with those of the intended use environment.

Evidence Form 2: Expert review or consensus statement on the appropriateness and representativeness of the testing data.

Evidence Form 3: Statistical analyses demonstrating the alignment of testing data demographics, conditions, and scenarios with real-world settings.
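Evidence Form 3 could include a comparison of category proportions in the test data against reference proportions for the intended use population. The sketch below reports per-category differences and the total variation distance (half the sum of absolute differences); the age bands and reference figures are hypothetical placeholders, and a formal test such as a chi-squared goodness-of-fit test could be used instead.

```python
from collections import Counter
from typing import Dict, Hashable, Iterable, Tuple


def proportions(values: Iterable[Hashable]) -> Dict[Hashable, float]:
    """Share of each category in a sequence of categorical values."""
    counts = Counter(values)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no values supplied")
    return {k: c / total for k, c in counts.items()}


def compare_to_reference(
    test_values: Iterable[Hashable], reference: Dict[Hashable, float]
) -> Tuple[Dict[Hashable, float], float]:
    """Per-category (test minus reference) differences and total variation distance."""
    observed = proportions(test_values)
    categories = set(observed) | set(reference)
    diffs = {c: observed.get(c, 0.0) - reference.get(c, 0.0) for c in categories}
    tvd = 0.5 * sum(abs(d) for d in diffs.values())
    return diffs, tvd


if __name__ == "__main__":
    test_age_bands = ["18-39", "18-39", "40-64", "40-64"]  # toy test set
    # Hypothetical reference proportions for the intended use population.
    reference = {"18-39": 0.5, "40-64": 0.25, "65+": 0.25}
    diffs, tvd = compare_to_reference(test_age_bands, reference)
    print(diffs, tvd)
```

Categories present in the reference but absent from the test data (here the hypothetical "65+" band) appear with a negative difference, which flags the gap directly rather than letting it pass unnoticed.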

DS 5.3.7 The supplier shall evaluate and document the performance of the model in the intended use environment.

Evidence Form 1: Field performance report detailing model efficacy, accuracy, and reliability in the actual use environment.

Evidence Form 2: User feedback and case studies demonstrating the model's practical impact in real-world healthcare settings.

Evidence Form 3: Continuous performance monitoring logs showing the model’s adaptability and stability over time in the use environment.
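The monitoring logs in Evidence Form 3 might be produced by a rolling-window check like the one below, which tracks agreement between model outputs and confirmed outcomes over the most recent cases and records an alert when it drops below a set level. The class name, window size, alert level and the use of accuracy are illustrative assumptions; in clinical settings ground truth often arrives with a delay, so cases would be recorded when the outcome is confirmed.

```python
from collections import deque
from typing import Deque, List, Optional, Tuple


class RollingAccuracyMonitor:
    """Accuracy over the most recent `window` confirmed cases, with a simple alert rule."""

    def __init__(self, window: int, alert_below: float) -> None:
        if window <= 0:
            raise ValueError("window must be positive")
        self.window = window
        self.alert_below = alert_below
        self._hits: Deque[int] = deque(maxlen=window)  # 1 = correct, 0 = incorrect
        self.cases_seen = 0
        self.alerts: List[Tuple[int, float]] = []  # (case number, rolling accuracy)

    def record(self, prediction: int, confirmed_outcome: int) -> Optional[float]:
        """Add one confirmed case; return rolling accuracy once the window is full."""
        self.cases_seen += 1
        self._hits.append(1 if prediction == confirmed_outcome else 0)
        if len(self._hits) < self.window:
            return None  # too few cases for a stable estimate
        accuracy = sum(self._hits) / self.window
        if accuracy < self.alert_below:
            self.alerts.append((self.cases_seen, accuracy))
        return accuracy


if __name__ == "__main__":
    monitor = RollingAccuracyMonitor(window=4, alert_below=0.75)
    cases = [(1, 1), (0, 0), (1, 1), (1, 1), (1, 0), (0, 1)]  # toy stream
    print([monitor.record(p, y) for p, y in cases])  # [None, None, None, 1.0, 0.75, 0.5]
    print(monitor.alerts)  # [(6, 0.5)]
```

Each alert carries the case number at which it fired, so the log can be cross-referenced with incident and corrective action records.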

DS 5.4.1 The supplier shall ensure and document that the model outputs are clinically relevant and meaningful.

Evidence Form 1: Clinical relevance report establishing the significance of model outputs in healthcare decision-making.

Evidence Form 2: Feedback from healthcare professionals on the practical utility and clinical applicability of the model outputs.

Evidence Form 3: Case studies or clinical trials demonstrating how model outputs have impacted patient care and outcomes.

DS 5.4.2 The supplier shall ensure that the outputs of the model are transparent and interpretable to users.

Evidence Form 1: User interface screenshots or demos showing clear, understandable model outputs.

Evidence Form 2: User training and documentation materials explaining the interpretation of model outputs.

Evidence Form 3: Feedback from users (e.g., clinicians) on their experiences and challenges in understanding and using model outputs.

DS 5.4.3 The supplier shall provide training to users to ensure effective and safe use of the product.

Evidence Form 1: Training module outlines and content, tailored to different user groups.

Evidence Form 2: User competency assessments and feedback on the training program.

Evidence Form 3: Post-training evaluation reports demonstrating users’ proficiency and confidence in using the product.

DS 5.5 The supplier shall have a documented process for updating the model and managing different versions of the model.

Evidence Form 1: Version control log detailing each update, the reasons for the changes, and the impacts on model performance.

Evidence Form 2: Update protocol documents outlining the process for initiating, testing, and deploying model updates.

Evidence Form 3: Stakeholder communication records, including notifications of updates and feedback on the impacts of these changes.
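A version control log for Evidence Form 1 could record, for each release, the reason for the change and the headline metrics, so that the impact of the latest update can be read off directly. The sketch below is hypothetical: `VersionEntry`, `VersionLog` and the metric names are illustrative, and a real log would normally live in a version control system or model registry rather than in memory.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class VersionEntry:
    """One released model version (hypothetical structure)."""

    version: str
    reason: str
    metrics: Dict[str, float] = field(default_factory=dict)


class VersionLog:
    """Append-only, in-memory log of model releases."""

    def __init__(self) -> None:
        self._entries: List[VersionEntry] = []

    def add(self, entry: VersionEntry) -> None:
        if any(e.version == entry.version for e in self._entries):
            raise ValueError(f"version {entry.version} already logged")
        self._entries.append(entry)

    def impact_of_latest(self) -> Dict[str, float]:
        """Change in each shared metric between the last two releases."""
        if len(self._entries) < 2:
            return {}
        previous, latest = self._entries[-2], self._entries[-1]
        shared = sorted(set(previous.metrics) & set(latest.metrics))
        return {m: latest.metrics[m] - previous.metrics[m] for m in shared}


if __name__ == "__main__":
    log = VersionLog()
    # Illustrative figures only.
    log.add(VersionEntry("1.0.0", "initial release",
                         {"sensitivity": 0.5, "specificity": 0.75}))
    log.add(VersionEntry("1.1.0", "retrained with data from a new site",
                         {"sensitivity": 0.75, "specificity": 0.75}))
    print(log.impact_of_latest())  # {'sensitivity': 0.25, 'specificity': 0.0}
```

Rejecting duplicate version strings keeps each entry uniquely identifiable when it is cited in stakeholder communications under Evidence Form 3.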

DS 5.5.1 The supplier shall document how new data and insights are incorporated into model updates.

Evidence Form 1: Data integration reports showing how new datasets or insights are assimilated into the model.

Evidence Form 2: Impact assessment analyses detailing the effects of new data on model performance and decision-making.

Evidence Form 3: Documentation of ethical and legal considerations addressed when integrating new data.

PC 5.5.2 The supplier shall continuously monitor the performance of the model post-deployment and document the findings.

Evidence Form 1: Continuous monitoring reports including performance metrics, user feedback, and incident logs.

Evidence Form 2: Periodic performance review summaries highlighting trends, challenges, and areas for improvement.

Evidence Form 3: Corrective action records demonstrating responses to performance issues or user concerns.

PC 5.6 The supplier shall ensure and document compliance with all relevant regulatory requirements for the product.

Evidence Form 1: Regulatory compliance checklist and certification documents relevant to the product.

Evidence Form 2: Audit reports from regulatory bodies or third-party auditors confirming compliance.

Evidence Form 3: Risk management and mitigation documentation in line with regulatory standards.

PC 5.7 The supplier shall prioritise and document measures taken to ensure the safety of patients and users of the product.

Evidence Form 1: Safety protocol documents outlining measures and guidelines for protecting patient and user safety.

Evidence Form 2: Incident reports and response strategies relating to safety concerns.

Evidence Form 3: Feedback from healthcare professionals and patients on safety aspects of the product.

PC 5.8 The supplier shall document and address ethical considerations relevant to the product, including patient consent and data privacy.

Evidence Form 1: Ethical review reports detailing considerations such as patient consent, data privacy, and fairness.

Evidence Form 2: Consent forms and privacy policies demonstrating adherence to ethical standards.

Evidence Form 3: Stakeholder engagement records discussing the ethical implications of the product.

VALIDATION

PC 6.1 The supplier shall engage with relevant stakeholders throughout the product lifecycle to ensure the product meets user and patient needs.

Evidence Form 1: Stakeholder engagement logs detailing meetings, communications, and feedback throughout the product lifecycle.

Evidence Form 2: Documentation of how stakeholder input has been incorporated into the product design and updates.

Evidence Form 3: Stakeholder satisfaction surveys and reports assessing the alignment of the product with user and patient needs.

DA 6.2 The supplier shall ensure transparency in the development process and be accountable for the product’s performance.

Evidence Form 1: Development process documentation providing detailed insight into the model design, data used, and decision-making processes.

Evidence Form 2: Publicly available product performance reports and accountability statements.

Evidence Form 3: Incident response and rectification records demonstrating accountability in addressing product performance issues.

DS 6.3 The supplier shall commit to continuous learning and improvement of the product based on performance data and user feedback.

Evidence Form 1: Continuous improvement logs showing ongoing updates, enhancements, and modifications based on data and feedback.

Evidence Form 2: User feedback and performance data analysis reports driving product improvements.

Evidence Form 3: Documented reviews and updates of learning protocols and development algorithms in response to emerging data and trends.

DA 6.4 The supplier shall implement robust data governance practices to ensure data quality, security, and compliance with regulations.

Evidence Form 1: Data governance policy documents outlining practices for data quality, security, and compliance.

Evidence Form 2: Audit reports on data management practices verifying adherence to governance standards.

Evidence Form 3: Incident logs related to data breaches or issues, along with response and remediation actions taken.

DA 6.5 The supplier shall conduct comprehensive risk management to identify, assess, and mitigate risks associated with the product.

Evidence Form 1: Risk assessment reports detailing identified risks, impact assessments, and mitigation strategies.

Evidence Form 2: Risk management protocol documentation showing systematic approaches to risk identification and mitigation.

Evidence Form 3: Historical logs of identified risks and the effectiveness of implemented mitigation strategies over time.

PC 6.6 The supplier shall have a clear plan for the product’s end-of-life, including decommissioning and data handling.

Evidence Form 1: End-of-life plan document outlining strategies for decommissioning, data migration, and user transition.

Evidence Form 2: Stakeholder communications on end-of-life plans, ensuring transparency and preparation.

Evidence Form 3: Post-decommissioning report analysing the process, challenges, and lessons learned.

____

DEPLOYMENT

PC 7.1 The supplier shall document the deployment process of the product, ensuring it is consistent with the intended use and user needs.

Evidence Form 1: Deployment plan detailing the steps, timelines, and considerations for rolling out the product.

Evidence Form 2: User training and support materials provided at the time of deployment.

Evidence Form 3: Post-deployment feedback reports from users, confirming the product meets the intended use and user needs.

PC 7.2 The supplier shall establish a system for collecting and addressing user feedback continuously.

Evidence Form 1: User feedback collection system documentation, including methods and frequency of data collection.

Evidence Form 2: Summary reports of user feedback, highlighting key issues and responses.

Evidence Form 3: Change logs showing how user feedback has led to product modifications or improvements.

PC PS 7.3 The supplier shall monitor and document the product's performance in real-world settings, ensuring it aligns with the expected outcomes.

Evidence Form 1: Real-world performance monitoring reports, including data on efficacy, reliability, and user satisfaction.

Evidence Form 2: Comparative analysis of expected versus actual performance metrics in real-world settings.

Evidence Form 3: Case studies or user testimonials illustrating the product's impact in real-world scenarios.

____

MONITORING

PC 8.1 The supplier shall conduct post-market surveillance to monitor the safety and effectiveness of the product after release.

Evidence Form 1: Post-market surveillance plan detailing the methodologies and frequency of monitoring.

Evidence Form 2: Surveillance reports summarising findings on safety and effectiveness.

Evidence Form 3: Incident reports and corrective actions taken in response to post-market surveillance findings.

PC 8.2 The supplier shall ensure ongoing compliance with evolving regulatory requirements and document any changes made to maintain compliance.

Evidence Form 1: Documentation of regulatory updates and revisions to the product to maintain compliance.

Evidence Form 2: Audit reports from regulatory bodies or third-party auditors verifying ongoing compliance.

Evidence Form 3: Change logs and impact assessments related to compliance updates.

DS 8.3 The supplier shall maintain the integrity and security of data used and generated by the product throughout its lifecycle, up to and including decommissioning.

Evidence Form 1: Data security and integrity protocols, including measures for protection against breaches and unauthorised access.